In June 2007, Apple released the first iPhone, and people experienced the magic of sensors, as they shifted the phone's display from portrait to landscape mode with a flick of the wrist. The enabling technology behind this modern parlor trick is sensor fusion, which combines data from multiple sensors to enable devices to better understand the world around them and to enhance human-machine interactions.

Figure 1. Sensor fusion enhances mobile, wearable, and Internet of Things devices by providing an all-seeing contextual awareness while preserving battery life. (Courtesy of Audience Inc.)

“Sensors on their own provide limited data, but if you combine data from multiple sensors, you can create a more productive ‘big picture’ of an event, where you bring location-based services and context awareness to bear," says Steve Whalley, Chief Strategy Officer, MEMS Industry Group (MIG). “The technology enhances the user interface by providing a perspective more meaningful than a single data point possibly could. So in this case, the whole is greater than the sum of the parts."

Sensor fusion technology has advanced significantly since then, but it is still at an early stage of development. As the Internet of Things takes shape and mobile and wearable technologies mature, electronic device designers are bringing fusion technology to bear on a broader range of applications. This growth spurt has, in turn, created new challenges for the technology.

A Question of Vision

The first of these challenges is conceptual in nature. People have traditionally thought of sensor fusion in terms of motion sensors (accelerometers and gyroscopes), but if you think of it as software that manages anything that can be sensed, the technology has a much broader reach.

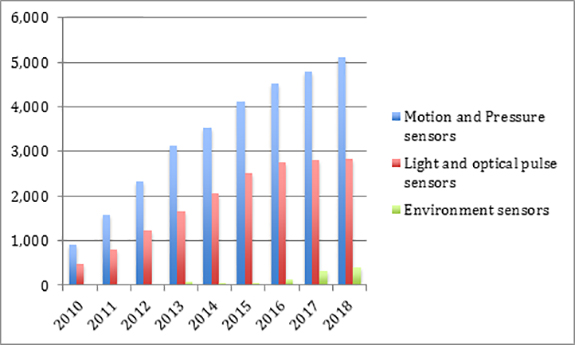

Bringing in new sensor types introduces a wealth of untapped information (Figure 2), but it requires that developers answer a whole new set of questions. “We are adding humidity sensors that can determine whether the device is indoors or outdoors, optical ambient light sensors that help the device understand whether it is in a light or dark environment, optical proximity sensors that measure the distance in front of the phone, ultrasonic sensors to understand where your hand is relative to the phone, and gas sensors to measure volatile organic compounds in the environment,” says Kevin Shaw, Director of Business Development at Audience. “There are even multispectral cameras coming online that gauge environmental conditions. The challenge is understanding the information they provide and discovering how the information can be used to provide value.”

Figure 2. Although early sensor fusion systems relied almost solely on motion sensors, market forces are driving vendors to harness the power of more types of sensors, such as medical, fitness, and environmental sensing devices. (Courtesy of IHS Inc.)

When designers deploy sensors to platforms beyond smartphones and tablets, understanding the value proposition becomes even more complex. Some smart home, building automation and industrial devices are stand-alone systems that are not attached to a user, so it's important to understand the use case of the device and the environment in which it operates. “One interesting application of sensor fusion for emerging smart home and industrial applications is presence detection," Shaw says. “Being able to determine when a person has walked up to a device and is preparing to interact with it allows the device to wake up."

“From a pure functionality point of view, features like voice wake-up, context awareness and location-based services are becoming important," says Reza Kazerounian, senior VP and GM of the Microcontroller Business Unit at Atmel. “We are, however, only beginning to see how these things will mature and how they will ultimately be used. There are a lot of ideas about how these new features can be used to provide commercial services."

A Balancing Act

In addition to adding new sensor types to the fusion mix, developers must also find a way to reconcile two seemingly incompatible demands: make draconian cuts in power consumption and deliver always-on technology to support context awareness, gesture recognition, and indoor navigation. Consumers expect the latest generation of mobile and wearable devices to have an all-seeing awareness of the environment in which they operate and an understanding of the needs of the user. The catch is that the device must be able to do this without draining the battery—the sole source of energy for many devices.

Designers are seeking to solve this conundrum by turning to various forms of multi-processor integration. The challenge is to find the right balance.

Tailoring the Hardware

To achieve optimum power efficiency, system developers must limit the time a device's display, application processor, radios and GPS are in an active state. At the same time, the system must allow specific sensors to remain in an always-on state.
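The trade-off can be made concrete with a time-weighted average. The sketch below is a minimal illustration, not a vendor model: the function name and every power figure in it are hypothetical, chosen only to show how a component's duty cycle drives its average draw.

```python
def average_power_mw(active_mw: float, sleep_mw: float, duty_cycle: float) -> float:
    """Time-weighted average power of a component that is active
    for `duty_cycle` (0..1) of the time and asleep otherwise."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_mw + (1.0 - duty_cycle) * sleep_mw


# Hypothetical figures, for illustration only.
app_processor_mw = average_power_mw(active_mw=500.0, sleep_mw=1.0, duty_cycle=0.05)
always_on_sensor_mw = average_power_mw(active_mw=0.5, sleep_mw=0.5, duty_cycle=1.0)

print(f"application processor: {app_processor_mw:.2f} mW average")
print(f"always-on sensor:      {always_on_sensor_mw:.2f} mW average")
```

Under these assumed numbers, the application processor dominates the budget even when it is awake only a small fraction of the time, which is why designers work to shorten its active periods while leaving low-power sensors running.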

To accomplish these goals, designers offload as many functions as possible from the power-hungry application processor, allowing specialized low-power processors called sensor hubs to process sensor data. Some fusion system developers have taken this approach one step further by adding CPUs that process only specific types of sensor data. These processors combine the output of similar sensor types, such as motion, create a picture of a common event and pass the data on to the sensor hub. The hub aggregates all of the data from the various sensors and sends a compressed data stream to the application processor, which performs complex, computationally intensive functions.
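A rough sketch of this three-tier flow is shown below. It is an illustration under stated assumptions rather than any vendor's implementation: the function names, thresholds, and wake policy are all hypothetical, and each sample window is assumed to be non-empty.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Event:
    source: str  # which sensor-specific processor produced the event
    label: str   # compact description, e.g. "moving" or "dark"


def motion_processor(accel_magnitudes_g: List[float], threshold_g: float = 0.2) -> Event:
    """Tier 1: reduce a window of accelerometer magnitudes to one event.
    Deviation from 1 g (gravity alone) is treated as movement."""
    peak = max(abs(m - 1.0) for m in accel_magnitudes_g)
    return Event("motion", "moving" if peak > threshold_g else "still")


def light_processor(lux_samples: List[float], dark_below_lux: float = 10.0) -> Event:
    """Tier 1: reduce a window of ambient light readings to one event."""
    mean_lux = sum(lux_samples) / len(lux_samples)
    return Event("light", "dark" if mean_lux < dark_below_lux else "bright")


def sensor_hub(events: List[Event]) -> Dict[str, str]:
    """Tier 2: aggregate per-sensor events into one compact summary."""
    return {event.source: event.label for event in events}


def application_processor_should_wake(summary: Dict[str, str]) -> bool:
    """Tier 3 gate (hypothetical policy): wake the application processor
    only when the device is moving in a bright environment."""
    return summary.get("motion") == "moving" and summary.get("light") == "bright"


summary = sensor_hub([
    motion_processor([1.0, 1.5, 0.7]),
    light_processor([200.0, 300.0]),
])
print(summary, application_processor_should_wake(summary))
```

The point of the layering is that raw sample windows never leave the first tier. The application processor sees only a small summary, and only when the hub decides the summary is worth waking it for.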

Advocates of this approach contend it is the most power-efficient architecture. “The closer you perform the processing to the point where the data is generated, the lower the power consumption," says Sameer Bidichandani, senior director of product marketing for InvenSense.

Recently, two leading fusion technology providers—InvenSense and Audience—have released products embodying this approach. Both products include specialized sensor data processors, supported by a tailored software framework.

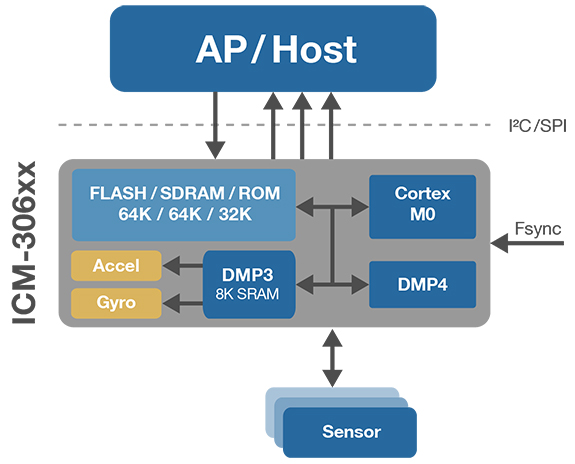

InvenSense's ICM-30630 consists of an ARM Cortex-M0 microcontroller and InvenSense's low-power DMP3 and DMP4 Digital Motion Processors (Figure 3). The DMP3 offloads all motion processing tasks, while the DMP4 offloads computationally intensive operations. Together, the ICM-30630 sensor software framework and the Cortex-M0 handle sensor management tasks via an integrated real-time operating system. They support the acquisition and processing of data from the integrated 6-axis gyroscope and accelerometer and from external sensors, enabling the system to deliver always-on functionality. The unit also provides a programmable open-platform area, where OEMs can add custom software features.

Figure 3. InvenSense’s ICM-30630 programmable tri-core sensor hub reduces energy consumption by shifting functions from the power-hungry application processor to low-power processors tailor-made to manage sensor functions. (Courtesy of InvenSense Inc.)

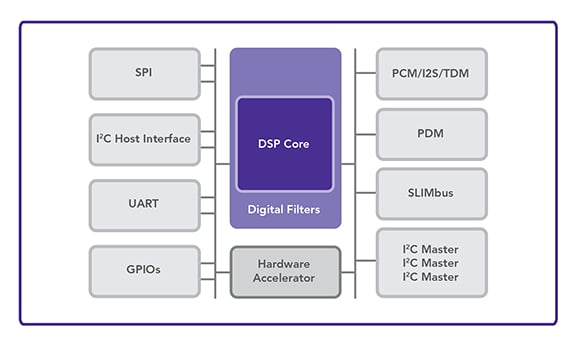

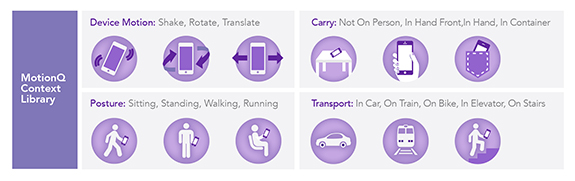

Audience's N100 multisensory processor combines MotionQ and VoiceQ technologies (Figure 4). MotionQ is built around the MQ100 low-power motion processor, enabling gesture and context awareness (Figure 5). VoiceQ includes the eS700 series processor and an always-on voice activity detector to continuously monitor for voice signals. Once voice activity is detected, VoiceQ technology compares the incoming signal with pre-stored key phrases, waking the device and indicating the user's intent to interact with it. During this process, all components remain in sleep mode except the N100 processor and the digital microphones. The voice activation feature adds an extra dimension to the power-saving measures, and by combining sound and motion data, the N100 provides micro-location information, removes background noise and improves the accuracy of speech-recognition engines.
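The two-stage wake sequence described above (a cheap, always-on voice activity check, followed by key-phrase matching only when voice is present) can be sketched as follows. This is not Audience's VoiceQ implementation: the energy threshold is an arbitrary assumption, and `decode` is a hypothetical stand-in for whatever acoustic matcher a real system would use.

```python
from typing import Callable, Sequence, Set


def frame_energy(samples: Sequence[float]) -> float:
    """Mean squared amplitude of one audio frame."""
    return sum(s * s for s in samples) / len(samples)


def voice_activity(samples: Sequence[float], energy_threshold: float = 0.01) -> bool:
    """Stage 1: inexpensive always-on check. The threshold is an assumption."""
    return frame_energy(samples) > energy_threshold


def matches_key_phrase(decoded_text: str, key_phrases: Set[str]) -> bool:
    """Stage 2: compare the decoded phrase with the pre-stored key phrases."""
    normalized = " ".join(decoded_text.lower().split())
    return normalized in key_phrases


def should_wake(
    samples: Sequence[float],
    decode: Callable[[Sequence[float]], str],  # hypothetical recognizer
    key_phrases: Set[str],
) -> bool:
    """Run the costly stage only after the cheap stage has fired."""
    if not voice_activity(samples):
        return False  # stage 2 never runs; the rest of the system stays asleep
    return matches_key_phrase(decode(samples), key_phrases)


# Usage with a fake decoder standing in for a real key-phrase engine.
phrases = {"hello device"}
quiet_frame = [0.0] * 160
loud_frame = [0.5] * 160
print(should_wake(quiet_frame, lambda s: "hello device", phrases))
print(should_wake(loud_frame, lambda s: "Hello  Device", phrases))
```

Gating the matcher behind the activity detector is what keeps the always-on cost low: most of the time only the first, inexpensive check executes.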

Figure 4. Audience’s N100 multisensor processor combines voice and motion sensor data from the user and environment to enhance contextual awareness and provide a more natural user interface. (Courtesy of Audience Inc.)

To integrate all of the components making up this tri-layer processing architecture, the designers have adopted the system-on-chip packaging approach. This, advocates contend, minimizes the fusion system's footprint, optimizes performance, and further improves energy efficiency.

Figure 5. Audience’s N100 includes the MotionQ library, which is a software platform that includes advanced algorithms, a power-conscious architecture, and a high-level application programming interface. (Courtesy of Audience Inc.)

There is an irony to the rise of off-loading engines. “We have spent the past three decades using monster CPUs,” Shaw says. “Now we are in this odd position with mobile devices where we want to turn off these powerful CPUs as quickly as possible. But we still expect the devices they reside on to have this all-seeing awareness without draining the battery. So we have come to this strange point where we are starting to pull silicon out of systems that have already been integrated."

Becoming More Human

Sensor fusion continues to evolve, as developers combine its strengths with machine learning and aspire to functionality mimicking the processes of the human mind. “We are seeing the evolution of the algorithms over time — being able to understand a broader range of intent and context than we could ever imagine before," says Audience's Shaw. “Machine learning algorithms identify patterns and enable you to solve a much broader class of problems than could be accomplished with old-style deterministic code."

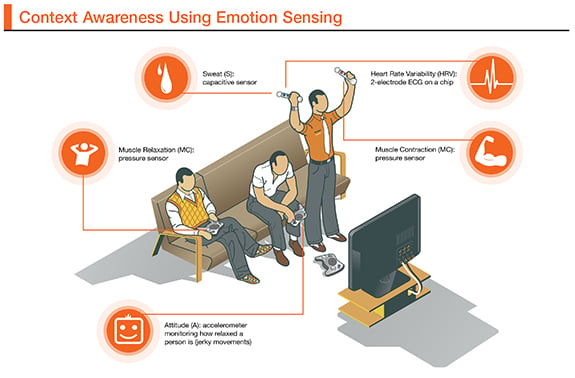

Such advances in fusion technology are envisioned in a white paper titled “The Role of Sensor Fusion and Remote Emotive Computing in the Internet of Things.” Here, Freescale considers how the technology could provide new classes of services by detecting emotions through sensor measurements of physiological states. These might include measuring muscle relaxation via a pressure sensor, heart rate variability via an ECG chip, sweat via a capacitive sensor, attitude via an accelerometer monitoring a person's state of relaxation (jerky movements vs. steady hands) and muscle contraction via a pressure sensor (Figure 6).

Figure 6. Future sensor fusion systems may use emotion sensing to expand context awareness. (Courtesy of Freescale Semiconductor Inc.)

“Mobile and exercise devices have focused on only one facet of the information that sensor data contains,” says Ian Chen, director for marketing of systems architecture, software and algorithms at Freescale Semiconductor. “Advances in sensor data analytics are enabling a new generation of smart devices and appliances, creating business efficiencies with new real-time data, and improving user interfaces with remote emotive computing.”

A quick look at the products coming on the market today makes it clear that sensor fusion technology is still evolving. Equally clear is the fact that its potential will be defined as much by imagination as by technological advances.
