Support for Long Term Evolution (LTE), the fourth-generation cellular air interface technology, might be the latest must-have on a core chipset supplier's price list. However, simply being able to claim LTE support, meet 3GPP requirements or even pass mobile network operator certification does not necessarily translate into the same consumer experience. These certifications and requirements represent a minimum acceptable level of capability, but they do not always assess whether any further optimizations and innovations are present.

Because the modem plays a central role in delivering the use cases and services that consumers have come to expect from devices such as smartphones and tablets, it is critical to understand where LTE modem capabilities can and should extend past minimum requirements, and how they ultimately affect the consumer experience, from website downloads, video playback and social networking to roaming and battery life. To this end, IHS will deliver a series of LTE Modem Insights that explore potential opportunities for differentiation. This article is the first in the series.

"Basic" support for LTE is not so basic

As the adage goes, one must learn to walk before learning to run. Before assessing more advanced capabilities such as carrier aggregation, VoLTE and co-existence optimization, let us first assess the support of LTE at its most basic functionality, namely mobile broadband data connectivity on one contiguous channel. Certification and interoperability testing (IOT) require the modem to perform certain steps: acquire the base station, authenticate, request and receive radio resources, transmit and receive data, hand over from one site to the next and manage power levels within network parameters. However, simply demonstrating these capabilities does not an optimized modem make, and it raises a few questions, such as:

• How fast does the modem perform these steps?

• How does the modem operate in low signal strength environments?

• How efficient is the modem at delivering optimum performance while maintaining battery life?

At their core, these questions come down to two modem design elements: the protocol stack and its peripheral algorithms, and the RF architecture.

The protocol stack and peripheral algorithms are essentially the "programs" that run on the baseband of the modem chipset. They dictate how and when the modem communicates with the network, as defined by the supported air interface technology (in this case LTE), in order to perform functions such as base station acquisition, authentication, radio resource requests and traffic channel acquisition, among others.

One can think of these air interface technologies (such as LTE, HSPA+ and EvDO) as different languages that modems, or the devices in which they are integrated, use to communicate over the air with specific networks. Just as individuals have different skill levels when speaking languages such as English, Chinese or Spanish, different modems, depending on how they are designed, differ in how efficiently they communicate over the air, not only in ideal conditions but also in the presence of noise, interference and fading.

When designed properly, the protocol stack not only allows rapid processing of the control elements, called layer 2 and layer 3 messages, that enable the aforementioned functions, but also mitigates the effects that poor signaling algorithms could impose on the consumer's experience of the data call.

For example, one element that all LTE modems are required to support is the handing over of calls between the LTE network and the legacy 3G network. A modem might demonstrate that it can do this in the lab or in ideal conditions, which would allow it to pass a certification test.

However, in the field, at cell boundaries where the LTE signal is potentially just as bad as the 3G signal, an unoptimized algorithm for this function could cause the modem to simply bounce back and forth between technology modes and never establish a traffic channel, degrading the consumer experience.

Similarly, should the algorithm request the new 3G channel too late, so that the LTE signal is lost before the new traffic channel is established, the effect on the consumer experience would be akin to someone disconnecting the Ethernet cable while you are streaming the end of a championship football match on your PC: an unpleasant experience, to say the least.
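
The two failure modes described above, bouncing between technologies and switching too late, can be illustrated with a simplified decision rule. The sketch below is a minimal illustration, not any supplier's algorithm or the 3GPP procedure. It assumes that the LTE and 3G signal levels can be compared directly in dBm, and the names `HandoverPolicy`, `hysteresis_db`, `time_to_trigger`, `simulate` and `count_switches`, along with all sample values, are hypothetical. A switch is allowed only when the other technology is stronger by a margin for several consecutive measurements.

```python
from dataclasses import dataclass


@dataclass
class HandoverPolicy:
    # Both values are illustrative assumptions, not figures from any standard.
    hysteresis_db: float = 3.0  # margin by which the other technology must be stronger
    time_to_trigger: int = 3    # consecutive measurements the margin must hold


def simulate(policy, lte_dbm, g3_dbm, start="LTE"):
    """Return the serving technology ("LTE" or "3G") after each measurement."""
    serving = start
    streak = 0  # consecutive measurements in which the other technology won
    history = []
    for lte, g3 in zip(lte_dbm, g3_dbm):
        if serving == "LTE":
            serving_level, other_level = lte, g3
        else:
            serving_level, other_level = g3, lte
        if other_level > serving_level + policy.hysteresis_db:
            streak += 1
        else:
            streak = 0
        if streak >= policy.time_to_trigger:
            serving = "3G" if serving == "LTE" else "LTE"
            streak = 0
        history.append(serving)
    return history


def count_switches(history, start="LTE"):
    """Count how many times the serving technology changed."""
    switches = 0
    previous = start
    for current in history:
        if current != previous:
            switches += 1
        previous = current
    return switches


# Hypothetical cell-boundary samples: LTE fluctuates around a steady 3G level.
lte_samples = [-100, -102, -100, -102, -100, -102, -100, -102]
g3_samples = [-101] * 8

undamped = simulate(HandoverPolicy(hysteresis_db=0.0, time_to_trigger=1), lte_samples, g3_samples)
damped = simulate(HandoverPolicy(hysteresis_db=3.0, time_to_trigger=3), lte_samples, g3_samples)

print(count_switches(undamped))  # 7
print(count_switches(damped))    # 0
```

With these fluctuating samples, the undamped policy changes technology on almost every measurement, while the damped policy stays on LTE. The parameters also expose the opposite risk: a margin or trigger count that is too large delays the switch, which corresponds to the late-handover case.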

The RF front end architecture is the section of the core chipset that converts the information sent or received by our mobile devices between digital and analog states, and is responsible for the physical transmission and reception of the radio frequency signals that carry our voice or data packets over the air. RF architecture design utilizes components such as:

• RF transceivers

• power amplifiers

• switches

• duplexers

• low noise amplifiers

• filters

• antenna tuners

• envelope trackers

The objective of a well-executed RF architecture design is to use the least amount of power during transmission and reception while still ensuring the best speed and latency possible for the current channel conditions.

Transmitting data is the most power-hungry function a modem can execute. By minimizing the amount of time a modem is actively transmitting, the RF architecture can help deliver an improved consumer experience while consuming only a fraction of the battery energy. To accomplish this, the RF architecture must minimize any self-inflicted noise and interference generated by its own components while also dealing with, or otherwise offsetting, the effects of external noise and interference inherently present in a lossy transmission medium such as air. System-level design and the inclusion of capabilities such as antenna tuning and envelope tracking also help in achieving this ideal.
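
The link between time on air and battery drain is simple arithmetic: energy is power multiplied by time. The sketch below is only an illustration, assuming a constant power draw while transmitting. The function name `transmit_energy_joules` and all of the numbers are hypothetical and are not measurements of any modem.

```python
def transmit_energy_joules(payload_bytes, throughput_bps, tx_power_watts):
    """Energy spent sending a payload, assuming constant power while transmitting."""
    seconds_on_air = payload_bytes * 8 / throughput_bps
    return tx_power_watts * seconds_on_air


# Hypothetical upload of 5 MB at an assumed 1 W draw during transmission.
payload = 5_000_000
slow_link = transmit_energy_joules(payload, throughput_bps=5_000_000, tx_power_watts=1.0)
fast_link = transmit_energy_joules(payload, throughput_bps=10_000_000, tx_power_watts=1.0)

print(slow_link)  # 8.0
print(fast_link)  # 4.0
```

Under these assumptions, doubling the achievable throughput, for example through a cleaner RF chain, halves the time on air and therefore the transmit energy for the same payload.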

As with most designs that have limited resources at their disposal, making the right trade-off decisions is critical for both of these design elements. In this case, due to the real-time nature of voice and data communications, modems have limited time (often microseconds) to make the algorithmic decisions mentioned above, as well as limited power with which to make them.

One trade-off that must be made correctly is how much, and which, functionality should be implemented in hardware versus software. The correct partitioning of tasks between these two implementation methods is critical to achieving optimized performance within the time and power boundaries of the environment in which LTE modems must operate.

For a modem supplier to have a chance of making the correct decisions across this variety of situations, it cannot design its products in isolation. That requires an intimate understanding of the ecosystem in which modems, and the devices that use them, operate. This ecosystem includes not only the smartphones and tablets into which the modems will be integrated, but also the applications and use cases those devices support, the radio access and core network elements within the wireless network, and the wired protocols, servers and data centers in the cloud with which the devices must interact, to name a few. The better modem suppliers understand this ecosystem, the more instinctively they can make design trade-off decisions that benefit the end user.

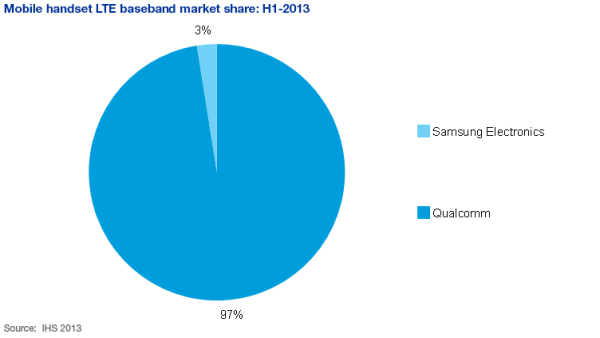

The first commercially available LTE devices to reach production volumes hit the market in 2009 with TeliaSonera's LTE launch in the Nordics. Currently, the majority of LTE smartphones available in the market utilize a Qualcomm chipset. Other suppliers with solutions include Samsung, Intel, GCT Semiconductor, nVidia and Broadcom. However, not all of these modem suppliers have design wins that have actually ramped to production volumes. The chart above is IHS' estimate of LTE modem market share by shipment volume for the first half of 2013.

Putting the "Evolution" in Long Term Evolution

Looking forward to the next couple of years, IHS expects the competitive landscape for LTE modems to remain hotly contested as other suppliers attempt to close the gap with Qualcomm by evolving their solutions to address at least some of the differentiated performance mentioned above. More modem suppliers will also enter the market as LTE continues to achieve scale. Those expected to enter include companies such as MediaTek from Taiwan.

A critical factor to consider with LTE, however, is that the initial support for LTE data calls assessed earlier in this article is only the tip of the spear in terms of future enhancements to LTE. Even as "basic" LTE functionality starts to achieve critical mass, solutions are already being designed for the next set of evolved capabilities, such as FDD/TDD and band-optimized architectures, VoLTE and carrier aggregation. All of these come with their own challenges and opportunities for differentiation above minimum operating standards, and IHS will explore and assess all of them in the following installments of this series.
