Digital cameras, particularly those found in smartphones, have become the de facto technology for capturing images. The technology isn’t particularly new. The first digital cameras were introduced about thirty years ago, and by the mid-2000s they had largely displaced film cameras. Standalone digital cameras didn’t reign very long, though. In 2007 the first-generation iPhone was introduced with a fixed-focus 2.0-megapixel (MP) camera on the back for taking digital photos. Within a few years, Apple and other smartphone manufacturers had added a second, front-facing camera and upgraded the rear camera to around 10 MP.

At that point, the discernible image quality started to challenge standalone digital cameras, while phones were far more accessible and convenient. Image processing software, better sensors, and even an additional rear camera (dual cameras) were added to further improve image quality. Today an iPhone 7 Plus features two 12 MP rear cameras: a wide-angle camera with an f/1.8 aperture and a telephoto camera with an f/2.8 aperture that provides 2x optical zoom and up to 10x digital zoom. It also has a 7 MP front-facing camera. The Samsung Galaxy S8 has a single 12 MP rear camera with an f/1.7 aperture and an 8 MP front-facing camera. As a result of the impressive imaging technology in smartphones, standalone digital cameras are now largely a niche product, with only 1.5% of the camera market (smartphones 98.4% and film 0.1%) [1].

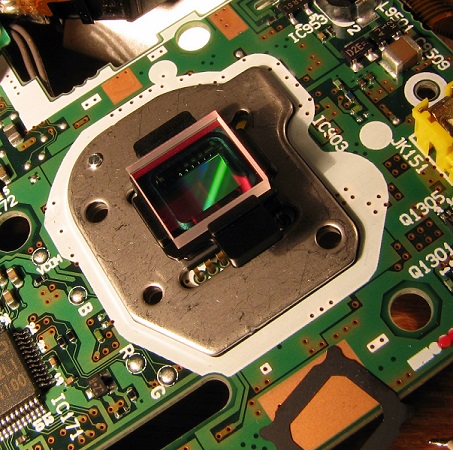

Thus, when we talk about digital cameras nowadays, we are really talking about smartphone cameras. The image sensors in these devices are almost always complementary metal-oxide-semiconductor (CMOS) sensors, chosen for their low power consumption, lower manufacturing cost, and ability to integrate analog-to-digital converters (ADCs) on the sensor itself. Integrating ADCs on the sensor allows data to be read off the sensor very quickly while also reducing noise. All of these features are important advantages if you are trying to maximize smartphone battery life, minimize manufacturing costs, and still produce images comparable to those from CCD (charge-coupled device) image sensors, the leading image sensor technology before smartphones. As a sign of the times, a few years back Sony, the largest manufacturer of CCD sensors, announced plans to phase them out by 2025 [2].

So how does a CMOS image sensor in a smartphone work? To understand the image sensor’s role, let’s first look at what happens when you point and shoot with a smartphone camera. First, the user focuses the camera by selecting the part of the scene he or she is interested in, usually by touching it on the touchscreen preview and letting the camera autofocus. Then the user taps to take the picture. Software analyzes the light entering the lens and determines the optimal exposure; because most smartphone lenses have a fixed aperture, in practice this usually means choosing the exposure time and sensor gain. The sensor is then exposed for that time, typically using an electronic rather than a mechanical shutter, and the light that gets through hits the CMOS image sensor, which captures the image and relays it to the phone's image-processing hardware, where it is processed and saved digitally.
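To make the exposure step more concrete, here is a minimal Python sketch of one iteration of a simple proportional auto-exposure loop. It assumes a fixed aperture, a sensor whose mean brightness (on a 0 to 1 scale) scales linearly with exposure time, a target mean of 0.18 (mid-gray), and hypothetical shutter-time limits; real phone auto-exposure algorithms are proprietary and considerably more elaborate. The `exposure_value` helper uses the standard photographic definition EV = log2(N²/t), where N is the f-number and t is the exposure time in seconds. All function names here are illustrative.

```python
import math


def exposure_value(f_number: float, exposure_time_s: float) -> float:
    """Standard photographic exposure value: EV = log2(N^2 / t)."""
    return math.log2(f_number ** 2 / exposure_time_s)


def next_exposure_time(current_time_s: float, measured_mean: float,
                       target_mean: float = 0.18,
                       min_time_s: float = 1 / 8000,
                       max_time_s: float = 1 / 4) -> float:
    """One step of a hypothetical proportional auto-exposure loop.

    Assumes the aperture is fixed and that mean brightness scales linearly
    with exposure time, so the time is scaled by target / measured and then
    clamped to the (hypothetical) allowed range.
    """
    if measured_mean <= 0:
        return max_time_s  # frame reads fully dark: use the longest allowed time
    proposed = current_time_s * target_mean / measured_mean
    return min(max(proposed, min_time_s), max_time_s)


if __name__ == "__main__":
    # Preview frame at 1/60 s came out twice as bright as the target,
    # so the next exposure is halved to roughly 1/120 s.
    print(next_exposure_time(1 / 60, 0.36))
    print(exposure_value(1.8, 1 / 120))
```

A proportional update is used only because it is the simplest rule that follows from the linear-response assumption; a real controller would also adjust gain and smooth changes across frames.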

As mentioned earlier, the CMOS image sensors in current smartphones tend to have about 12 MP, which means each sensor has about 12 million pixels. Image sensors are large arrays of these photosensitive sites, and the more pixels there are, the more resolution and detail the digital picture can capture. Each pixel has a tiny microlens over it that focuses incoming light onto the pixel's photosensitive area, increasing its effective fill factor. Each pixel also sits under a red, green, or blue filter so that color can be determined. When a photon enters the silicon, it penetrates to a depth that depends on its wavelength and, if it is absorbed, creates an electron-hole pair at that point. Because the photosensitive element in each pixel is a photodiode (a p-n junction), there are three places where this electron-hole pair can be formed: the p-type bulk, the n-type bulk, or the depletion region.
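The red, green, and blue filters are commonly laid out in a Bayer pattern, in which half of the pixels are green and a quarter each are red and blue. The Python sketch below assumes an RGGB ordering (other orderings such as BGGR also exist) and uses a 4032 × 3024 array, a common 12 MP resolution, purely as an example of the pixel arithmetic; the function names are illustrative.

```python
from collections import Counter


def bayer_color(row: int, col: int) -> str:
    """Return the filter color at (row, col) in an assumed RGGB Bayer mosaic."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"  # even rows: R G R G ...
    return "G" if col % 2 == 0 else "B"      # odd rows:  G B G B ...


def filter_counts(width: int, height: int) -> Counter:
    """Count how many pixels sit under each filter color (brute force)."""
    return Counter(bayer_color(r, c) for r in range(height) for c in range(width))


def megapixels(width: int, height: int) -> float:
    """Total pixel count expressed in millions."""
    return width * height / 1_000_000


if __name__ == "__main__":
    # Print a 4 x 4 corner of the mosaic.
    for r in range(4):
        print(" ".join(bayer_color(r, c) for c in range(4)))
    print(filter_counts(4, 4))     # half green, a quarter each red and blue
    print(megapixels(4032, 3024))  # 12.192768
```

Green gets twice as many sites as red or blue, a choice usually explained by the human eye being most sensitive to green light.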

If the pair is formed in the depletion region, the junction's built-in electric field sweeps the electron and hole apart, creating a drift current. If it is formed in the n-type region, the hole diffuses toward the depletion region; in the p-type region the situation is reversed, and the electron diffuses. Each of these diffusions creates a current. The total photodiode current is therefore the sum of the two diffusion currents and the drift current, and its magnitude depends on how many photons hit the photodiode and on the photodiode's area.
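A common first-order way to put numbers on this is to lump the drift current and both diffusion currents into a single quantum efficiency η, the fraction of incident photons that end up as collected charge. The photocurrent is then roughly I ≈ q·η·Φ·A, where q is the elementary charge, Φ is the photon flux per unit area, and A is the photodiode area; the number of electrons collected during an exposure of length t is η·Φ·A·t. The Python sketch below implements this simplified model. The 1.4 µm pixel pitch, the flux, and the efficiency used in the example are hypothetical values chosen only to illustrate the arithmetic.

```python
ELEMENTARY_CHARGE_C = 1.602176634e-19  # coulombs (exact SI value)


def photocurrent_amps(photon_flux: float, area_m2: float,
                      quantum_efficiency: float) -> float:
    """Simplified photodiode current I = q * eta * flux * area.

    photon_flux is in photons per square meter per second; quantum_efficiency
    lumps together the drift current and both diffusion currents.
    """
    return ELEMENTARY_CHARGE_C * quantum_efficiency * photon_flux * area_m2


def collected_electrons(photon_flux: float, area_m2: float,
                        quantum_efficiency: float, exposure_time_s: float) -> float:
    """Electrons accumulated during an exposure, i.e. (I / q) * t."""
    return quantum_efficiency * photon_flux * area_m2 * exposure_time_s


if __name__ == "__main__":
    pitch_m = 1.4e-6        # hypothetical square pixel, 1.4 um on a side
    area = pitch_m ** 2     # 1.96e-12 m^2
    flux = 1e16             # hypothetical photon flux, photons / (m^2 s)
    eta = 0.5               # hypothetical quantum efficiency
    print(photocurrent_amps(flux, area, eta))          # about 1.57e-15 A
    print(collected_electrons(flux, area, eta, 0.01))  # about 98 electrons
```

Both functions are linear in area and flux, which is the dependence on photon count and photodiode area described in the paragraph above.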

CMOS image sensors integrate amplifiers directly into the pixels. This allows a parallel readout architecture, in which each pixel can be addressed individually or read out in parallel as part of a group. On-chip ADCs then convert the amplified analog signals to digital values. The digital data is fed into an image signal processor (ISP), which combines the red, green, and blue values recorded by neighboring pixels to estimate the true color of the light at each pixel, a step known as demosaicing. In addition, the ISP corrects for lens imperfections, reduces noise, and performs many other operations that greatly improve the digital image. In fact, the ISP is increasingly being asked to carry the work of improving digital images, since it can do so without increasing the size of the smartphone. Finally, after additional processing and compression, the digital image is saved and the user can view it on the smartphone's display.
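The ADC and color-reconstruction steps can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how any particular phone's ISP works: `adc_quantize` models an ideal ADC with a hypothetical 10-bit default resolution, and `demosaic_superpixel` uses the simplest demosaicing approach, collapsing each 2×2 block of an assumed RGGB mosaic into one RGB pixel at half resolution. Production ISPs interpolate the missing colors at full resolution with far more sophisticated algorithms.

```python
from typing import List, Tuple

RGB = Tuple[float, float, float]


def adc_quantize(voltage: float, full_scale_v: float, bits: int = 10) -> int:
    """Map an analog pixel voltage to an integer code (idealized ADC).

    Voltages outside 0..full_scale_v are clipped to the lowest/highest code.
    """
    if full_scale_v <= 0:
        raise ValueError("full_scale_v must be positive")
    max_code = (1 << bits) - 1
    fraction = min(max(voltage / full_scale_v, 0.0), 1.0)
    return round(fraction * max_code)


def demosaic_superpixel(raw: List[List[int]]) -> List[List[RGB]]:
    """Collapse each 2x2 RGGB block into one RGB pixel (half resolution).

    Assumed block layout:  R G
                           G B
    The two green samples are averaged.
    """
    height, width = len(raw), len(raw[0])
    if height % 2 or width % 2:
        raise ValueError("mosaic dimensions must be even")
    image = []
    for r in range(0, height, 2):
        row = []
        for c in range(0, width, 2):
            red = float(raw[r][c])
            green = (raw[r][c + 1] + raw[r + 1][c]) / 2
            blue = float(raw[r + 1][c + 1])
            row.append((red, green, blue))
        image.append(row)
    return image


if __name__ == "__main__":
    print(adc_quantize(0.25, 1.0, bits=8))             # 64
    print(demosaic_superpixel([[100, 50], [70, 20]]))  # [[(100.0, 60.0, 20.0)]]
```

In the usage example, the single RGGB block yields one pixel whose red value is 100, whose green value is the average of 50 and 70, and whose blue value is 20.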

Smartphones and their CMOS image sensors have become the dominant player in consumer photography. Brands such as Apple and Samsung are in an arms race to improve picture (and video) quality while keeping prices and form factors the same. Although image sensor technology has improved, particularly in the number of pixels per unit cost, the major strides in image quality are being made in lenses and image signal processing. Still, the large-scale manufacturing behind CMOS technology in general is what enabled the current explosion of improvements in digital cameras, so it’s worth knowing how these sensors work.

1. https://petapixel.com/2017/03/03/latest-camera-sales-chart-reveals-death-compact-camera/

2. https://petapixel.com/2015/03/01/sony-to-stop-producing-ccd-image-sensors-to-focus-on-cmos-growth/