Virtual Reality (VR) and Augmented Reality (AR) are quickly becoming staples for everything from gaming to training first responders. While the new technology is exciting, it still has drawbacks. One of the major complaints about VR/AR headsets is that they can be uncomfortable to wear even for a short period of time. Eye strain, motion sickness and fatigue are among the most common physical complaints from people who have spent time in a VR environment.

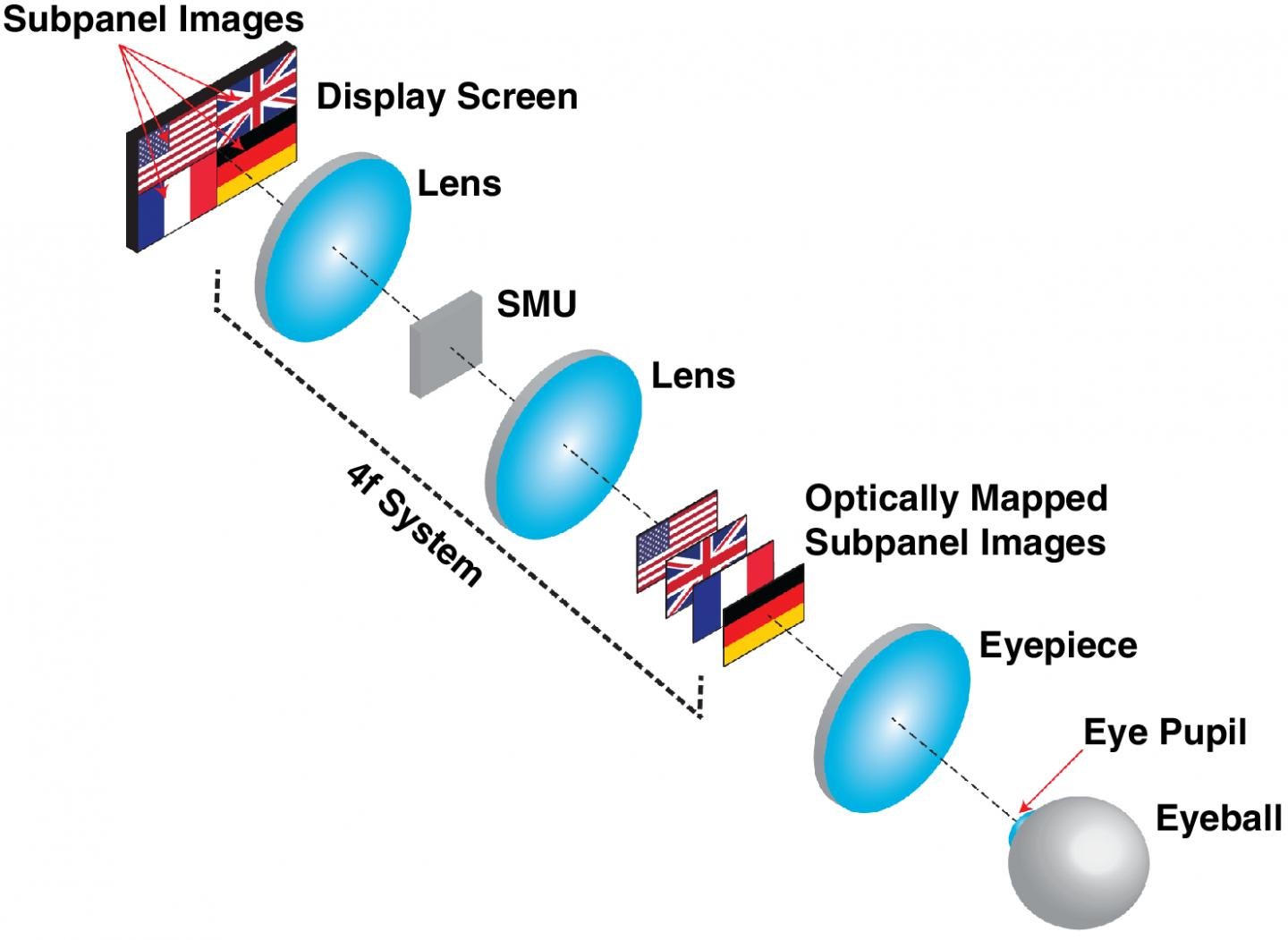

The operating principle of the OMNI three-dimensional display. (Professor Liang Gao, ECE Illinois)

The Intelligent Optics Lab (iOptics Lab) at the University of Illinois at Urbana-Champaign has come up with a solution for these physical ailments. Liang Gao, assistant professor of electrical and computer engineering, and graduate student Wei Cui, both part of the Beckman Institute, have teamed up to create a new optical mapping 3D display that makes VR headsets more comfortable to wear for longer periods.

Current 3D VR/AR displays present two images that the user’s brain uses to construct the impression of a 3D scene. This display method can cause eye fatigue and discomfort because of the vergence-accommodation conflict, a mismatch between where the eyes point and where they focus.

When the eye looks at an object, it points toward the object and its lens focuses on it. Depending on the distance to the object, the eyes converge or diverge (vergence) and the lenses change focus (accommodation). Vergence and accommodation normally work together automatically, but when the eye is presented with a rendered 3D scene, a conflict between the two arises.

The two images that make up a stereoscopic 3D image are displayed on a single surface at a fixed distance from the eyes, but they are slightly offset to create the 3D effect. The eyes have to work differently than usual: they converge to a distance that seems farther away while the lenses stay focused on an image that is centimeters from the face. This is where the vergence-accommodation conflict arises.
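To put rough numbers on that mismatch, here is a minimal Python sketch. It is not taken from the researchers' work: the 63 mm interpupillary distance and the example distances are illustrative assumptions, and the function names are hypothetical. It uses simple geometry for the vergence angle and the standard convention that focusing demand in diopters is the reciprocal of distance in meters.

```python
import math


def vergence_angle_deg(distance_m, ipd_m=0.063):
    """Angle between the two lines of sight when both eyes fixate a point
    straight ahead at distance_m. The 63 mm IPD default is an assumption."""
    return math.degrees(2 * math.atan(ipd_m / (2 * distance_m)))


def accommodation_diopters(distance_m):
    """Focusing demand on the eye's lens: reciprocal of distance in meters."""
    return 1.0 / distance_m


def conflict_diopters(vergence_distance_m, focal_distance_m):
    """Gap between where the eyes converge and where the lenses must focus."""
    return abs(accommodation_diopters(vergence_distance_m)
               - accommodation_diopters(focal_distance_m))


if __name__ == "__main__":
    # Illustrative values only: a rendered object that appears 2 m away,
    # shown on a display surface the eye must focus on at 0.5 m.
    print(round(vergence_angle_deg(2.0), 2), "degrees of vergence")
    print(conflict_diopters(2.0, 0.5), "diopters of conflict")
```

When the apparent distance and the focus distance are equal, as they are for a real object, the conflict computed this way is zero.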

To overcome these stereoscopic limitations, Cui and Gao created an optical mapping near-eye (OMNI) three-dimensional display method. This method divides the digital display into different subpanels. A spatial multiplexing unit (SMU) shifts the subpanel images to different depths with correct focus cues for depth perception. Unlike the offset images of the stereoscopic method, the SMU aligns the centers of the images to the optical axis. An algorithm then blends the images together, creating a seamless image.
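The two software-side ideas in this description, tiling one display into subpanels and blending content across depth planes, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the article does not say which blending algorithm the researchers use, so the sketch swaps in linear depth blending, a common choice for multi-plane displays. The function names, the 2x2 grid and the plane depths are all hypothetical.

```python
def split_into_subpanels(width, height, rows, cols):
    """Return (left, top, right, bottom) pixel rectangles that tile a
    width x height display into rows x cols subpanels (hypothetical layout)."""
    panels = []
    for r in range(rows):
        for c in range(cols):
            panels.append((c * width // cols, r * height // rows,
                           (c + 1) * width // cols, (r + 1) * height // rows))
    return panels


def blend_weights(depth_d, plane_depths_d):
    """Linear depth blending (an assumed technique, not confirmed by the
    source): split a pixel's intensity between the two depth planes that
    bracket its depth. Depths are in diopters; planes must be distinct.
    Weights are returned in ascending plane order and sum to 1."""
    planes = sorted(plane_depths_d)
    weights = [0.0] * len(planes)
    if depth_d <= planes[0]:
        weights[0] = 1.0          # beyond the first plane: clamp to it
    elif depth_d >= planes[-1]:
        weights[-1] = 1.0         # beyond the last plane: clamp to it
    else:
        for i in range(len(planes) - 1):
            lo, hi = planes[i], planes[i + 1]
            if lo <= depth_d <= hi:
                t = (depth_d - lo) / (hi - lo)
                weights[i] = 1.0 - t
                weights[i + 1] = t
                break
    return weights


if __name__ == "__main__":
    print(split_into_subpanels(1920, 1080, 2, 2))
    print(blend_weights(1.25, [0.0, 1.0, 2.0]))
```

A pixel whose depth falls exactly on a plane gets all of its intensity on that plane. A pixel between two planes is shared between them in proportion to how close it is to each, which is what hides the seams between planes in this kind of scheme.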

"People have tried methods similar to ours to create multiple plane depths, but instead of creating multiple depth images simultaneously, they changed the images very quickly," Gao said in an OSA news release. "However, this approach comes with a trade-off in dynamic range, or level of contrast, because the duration each image is shown is very short."

The researchers are continuing to work on the display, focusing on increasing its power efficiency and reducing the weight and size of the headsets.

"In the future, we want to replace the spatial light modulators with another optical component such as a volume holography grating," said Gao. "In addition to being smaller, these gratings don't actively consume power, which would make our device even more compact and increase its suitability for VR headsets or AR glasses."

The report on this research was published in Optics Letters.