The researchers' prototype system was tested on a stack of paper sheets, each with one letter printed on it, and it correctly identified the letters on the top nine sheets.

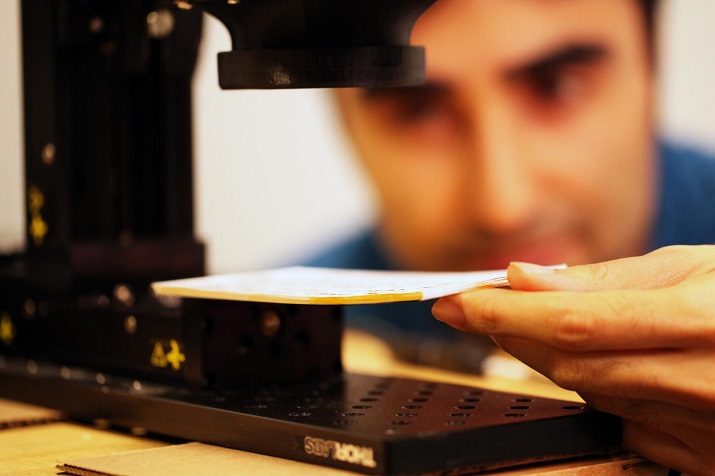

MIT researchers and colleagues are designing an imaging system that can read closed books. (Image Credit: Barmak Heshmat/MIT)

"The Metropolitan Museum in New York showed a lot of interest in this, because they want to, for example, look into some antique books that they don't even want to touch," said Barmak Heshmat, a research scientist at the MIT Media Lab and corresponding author on the new paper. He added that the system could be used to analyze any materials organized in thin layers, such as coatings on machine parts or pharmaceuticals.

Heshmat is joined on the paper by Ramesh Raskar, the NEC Career Development Associate Professor of Media Arts and Sciences; Albert Redo Sanchez, a research specialist in the Camera Culture group at the Media Lab; two of the group's other members; and Justin Romberg and Alireza Aghasi of Georgia Tech.

To make this possible, the researchers created algorithms that extract images of individual sheets in a stack of paper, and then teamed up with Georgia Tech researchers who developed an algorithm that interprets the often distorted or incomplete images as individual letters.

"It's actually kind of scary," said Heshmat, referring to the letter-interpretation algorithm. "A lot of websites have these letter certifications [CAPTCHAs] to make sure you're not a robot, and this algorithm can get through a lot of them."

The system employs terahertz radiation, the band of electromagnetic radiation between microwaves and infrared light, which has several advantages over other types of waves that can penetrate surfaces, such as X-rays or sound waves.

Terahertz radiation is currently being widely researched for use in security screening, because different chemicals absorb different frequencies of terahertz radiation to different degrees, giving each chemical a distinctive frequency signature.

In the same way, terahertz frequency profiles can distinguish between ink and blank paper, in a way that X-rays cannot.

Terahertz radiation can also be emitted in bursts so short that the distance it travels can be measured from the difference between its emission time and the time at which the reflected radiation returns to a sensor. This gives it much better depth resolution than ultrasound.
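The timing principle above reduces to a simple formula: a pulse that returns after a round-trip delay Δt has traveled to the reflecting boundary and back, so the boundary lies at a distance of c·Δt/2 (with c replaced by c/n inside a medium of refractive index n). The short Python sketch below is only an illustration of that arithmetic; it is not the researchers' code, and the function names are hypothetical.

```python
# Speed of light in vacuum, m/s (exact by the SI definition of the metre).
C = 299_792_458.0

def round_trip_distance(delay_s: float, refractive_index: float = 1.0) -> float:
    """One-way distance to a reflecting boundary from a round-trip delay.

    The pulse travels out and back, so the path length is halved.
    Inside a medium the pulse moves at c / n rather than c.
    """
    return (C / refractive_index) * delay_s / 2.0

def round_trip_delay(distance_m: float, refractive_index: float = 1.0) -> float:
    """Inverse: round-trip delay for a boundary at a given one-way distance."""
    return 2.0 * distance_m * refractive_index / C

# Extra round-trip delay contributed by a 20-micrometre air gap.
gap_delay = round_trip_delay(20e-6)
print(f"{gap_delay * 1e15:.0f} fs")  # prints 133 fs
```

The result, about 133 femtoseconds, shows why resolving gaps this thin demands pulses far shorter than those used in ultrasound.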

The system relies on the tiny air pockets, only 20 micrometers deep, that get trapped between the pages of a book. Because air and paper differ in refractive index (the degree to which a material bends light), the boundary between them reflects terahertz radiation back to a detector.
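How much a single air/paper boundary reflects can be estimated with the textbook Fresnel formula for light striking a flat boundary head-on: R = ((n1 - n2) / (n1 + n2))^2, where n1 and n2 are the refractive indices on either side. The sketch below assumes a refractive index of 1.5 for paper purely for illustration (the article does not give the value at terahertz frequencies) and ignores absorption.

```python
def normal_incidence_reflectance(n1: float, n2: float) -> float:
    """Fraction of incident power reflected at a flat boundary between media
    with refractive indices n1 and n2, for radiation arriving head-on
    (Fresnel equations at normal incidence, absorption ignored)."""
    r = (n1 - n2) / (n1 + n2)  # amplitude reflection coefficient
    return r * r               # power reflectance

N_AIR = 1.0
N_PAPER = 1.5  # ASSUMED illustrative index for paper; not from the article

R = normal_incidence_reflectance(N_AIR, N_PAPER)
print(f"{R:.2%} of the incident power is reflected at each air/paper boundary")
```

Under this assumed index, each boundary returns about 4 percent of the incident power. The reflection exists only because the indices differ: with identical indices on both sides, the formula gives zero.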

In testing, the team used a standard terahertz camera to emit ultrashort bursts of radiation, and the camera's built-in sensor was able to detect their reflections. From the time the reflections arrived, the MIT researchers' algorithm was able to measure the distance to the individual pages of the book.

At the same time, some of the radiation bounces around between pages before returning to the sensor, producing a spurious signal, and the sensor's electronics also produce a background hum. One of the tasks of the MIT researchers' algorithm is to filter out this kind of noise.
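The idea of locating page boundaries from reflection times while rejecting background noise can be illustrated with a deliberately simple stand-in. The sketch below is not the MIT algorithm, whose details are not described here. It swaps in a common textbook approach: smooth the signal, set a threshold from a stretch of trace recorded before any echo arrives, and keep only the strongest peak in each neighborhood (non-maximum suppression). The sampling interval, boundary depths, echo amplitudes, and noise level are all invented, the helper names are hypothetical, and the sketch handles only random background noise, not spurious echoes from radiation bouncing between pages.

```python
import math
import random

C = 299_792_458.0  # speed of light in vacuum, m/s

def moving_average(xs, width):
    """Centred moving average; windows are truncated at the ends of the trace."""
    half = width // 2
    out = []
    for i in range(len(xs)):
        window = xs[max(0, i - half): i + half + 1]
        out.append(sum(window) / len(window))
    return out

def detect_echo_distances(trace, dt, noise_samples, factor=3.0, min_sep=40, width=5):
    """HYPOTHETICAL helper: sorted one-way distances (m) to reflecting boundaries.

    trace:         detector signal sampled every dt seconds
    noise_samples: number of leading samples recorded before any echo arrives
    factor:        how far above the worst noise level a peak must rise
    min_sep:       minimum spacing, in samples, between two accepted echoes
    Distances assume the pulse travels at c (the slower speed in paper is ignored).
    """
    envelope = moving_average([abs(x) for x in trace], width)
    threshold = factor * max(envelope[:noise_samples])
    # Above-threshold samples, strongest first; keep a sample only if no
    # stronger sample has already been kept nearby (non-maximum suppression).
    candidates = sorted((i for i, v in enumerate(envelope) if v > threshold),
                        key=lambda i: envelope[i], reverse=True)
    kept = []
    for i in candidates:
        if all(abs(i - j) >= min_sep for j in kept):
            kept.append(i)
    return [C * (i * dt) / 2.0 for i in sorted(kept)]

# Synthetic trace with invented parameters.
random.seed(0)
dt = 10e-15                                            # 10 fs per sample
true_depths = [1.5e-3 + k * 120e-6 for k in range(5)]  # hypothetical boundaries
amplitudes = [1.0, 0.6, 0.35, 0.2, 0.004]              # last echo is below the noise
n = 3000
trace = [random.gauss(0.0, 0.01) for _ in range(n)]    # background hum
for depth, amp in zip(true_depths, amplitudes):
    centre = 2 * depth / C / dt                        # arrival time, in samples
    for i in range(n):
        trace[i] += amp * math.exp(-((i - centre) / 10.0) ** 2 / 2)

found = detect_echo_distances(trace, dt, noise_samples=500)
print([f"{d * 1e3:.3f} mm" for d in found])
```

With these invented parameters, the four stronger echoes should clear the threshold and map back close to their true depths, while the fifth, which is weaker than the background noise, is discarded. This is a small-scale analogue of why signals from deep pages become unrecoverable.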

Currently, the algorithm can correctly infer the distance from the camera to the top 20 pages in a stack. Beyond a depth of nine pages, however, the energy of the reflected signal is so low that the differences between frequency signatures are swamped by noise, which is why letters could be identified only on the top nine sheets.

However, terahertz imaging is still a new technology, and researchers are working to improve both the accuracy of detectors and the power of radiation sources, which would allow deeper penetration.

Story via MIT.