Years ago, an electronics industry guru attending one of his seminars challenged that notion, asking, “Dr. Deming, I understand your contention that controlling the process can prevent defects and produce only good products, but we’ve tried to implement that idea in our industry—with little success—for decades.” Dr. Deming looked back at his inquisitor and responded, “Yes. Except for the electronics industry. The technology evolves too quickly. You never reach a satisfactory equilibrium.” That caveat, however, does not mitigate the economic cost of testing the final product. Today’s complex products have turned that philosophy into something of a “Red Queen’s Race”—running as fast as you can just to stay even. Printed circuit board (PCB) testing provides a perfect example of both sides of that coin.

Historically the term “printed circuit board” evoked an image of a flat substrate containing components on one or both sides. It fit into an approximately rectangular enclosure and connected to other electronics that allowed the resulting system to execute the functions for which it was designed. With large and (by today’s standards) sparse logic and relatively simple capabilities, the product’s limited flexibility (pun intended) did not impede its ability to perform its job.

As the unrelenting miniaturization of electronics continued, however, the rectangular shape became less and less appropriate. Products like Fitbits do not conform to the conventional model. Initially the solution for those applications comprised rigid boards with ribbon cables or similar interfaces between them. But such connectors add failure points to the circuit, and the cables and wires increase the product's weight. In response, board designers created “rigid-flex” PCBs from rigid and flexible layers. The flexible portions bend so that the entire assembly fits into a smaller space.

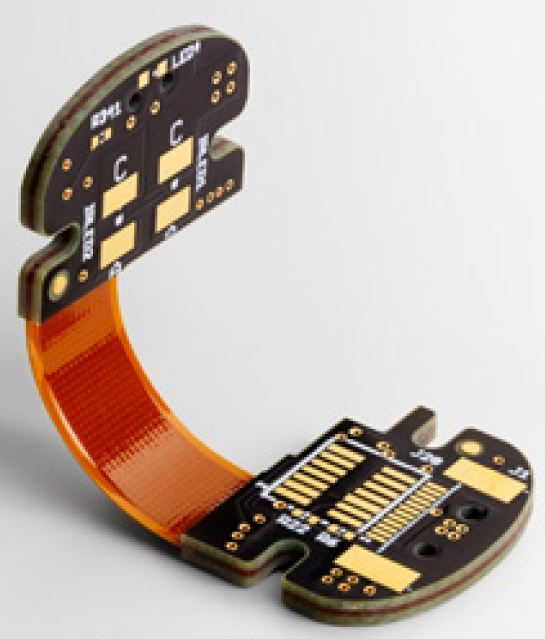

Rigid-flex boards have a rigid part at each end and a flexible section in the middle. Courtesy Sierra Circuits


The newest versions of these boards contain components on the flexible part as well as on the rigid sections. In a white paper (see the reference below), Ed Hickey, product engineering director at Cadence, advocates restricting the placement of components and vias in the bend area to avoid excess stress and cracking. For similar reasons, routing must cross the bend line perpendicular to the direction of the bend. He also cautions against putting pads too close to the bend area because they can peel off.
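To make those placement rules concrete, here is a minimal Python sketch of how a bend-area check might look. It is not taken from the white paper or from any Cadence tool. The class names, the `pad_keepout` clearance, the angle tolerance and the simplification that the bend line runs parallel to the y-axis are all assumptions made for illustration, and the routing rule is interpreted here as "traces should cross the bend line at a right angle."

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class BendArea:
    """Hypothetical bend region whose bend line runs parallel to the y-axis."""
    x_min: float
    x_max: float
    pad_keepout: float  # assumed pad clearance, same units as the coordinates


@dataclass(frozen=True)
class Feature:
    kind: str  # "component", "via", or "pad"
    name: str
    x: float
    y: float


@dataclass(frozen=True)
class Trace:
    name: str
    x1: float
    y1: float
    x2: float
    y2: float


def check_features(bend, features):
    """Flag components and vias inside the bend area and pads close to it."""
    violations = []
    for f in features:
        inside = bend.x_min <= f.x <= bend.x_max
        near = bend.x_min - bend.pad_keepout <= f.x <= bend.x_max + bend.pad_keepout
        if f.kind in ("component", "via") and inside:
            violations.append(f"{f.name}: {f.kind} inside bend area")
        elif f.kind == "pad" and near:
            violations.append(f"{f.name}: pad within keepout of bend area")
    return violations


def check_traces(bend, traces, tolerance_deg=1.0):
    """Flag straight traces that overlap the bend area at a non-right angle."""
    violations = []
    for t in traces:
        overlaps = min(t.x1, t.x2) <= bend.x_max and max(t.x1, t.x2) >= bend.x_min
        if not overlaps:
            continue
        # Angle between the trace and the x-axis; 0 degrees means the trace
        # runs perpendicular to a bend line that is parallel to the y-axis.
        angle = math.degrees(math.atan2(abs(t.y2 - t.y1), abs(t.x2 - t.x1)))
        if angle > tolerance_deg:
            violations.append(f"{t.name}: {angle:.1f} degrees off perpendicular in bend area")
    return violations


# Made-up layout data, for illustration only.
bend = BendArea(x_min=10.0, x_max=20.0, pad_keepout=2.0)
features = [Feature("via", "V1", 15.0, 3.0), Feature("pad", "P1", 21.0, 4.0)]
traces = [Trace("T1", 0.0, 5.0, 30.0, 5.0), Trace("T2", 0.0, 0.0, 30.0, 10.0)]
for message in check_features(bend, features) + check_traces(bend, traces):
    print(message)
```

A real design tool would work from the actual board geometry and stack-up rather than from point coordinates, and it would likely treat these findings as items to review rather than as hard failures, since the recommendation is to restrict such placements, not necessarily to forbid them.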

Although costlier to manufacture than conventional designs, rigid-flex boards boast superior reliability, versatility, signal integrity and packaging flexibility, along with relatively small size. Those advantages make them extremely popular in consumer products such as headphones, smartphones and tablet computers, as well as in devices aimed at military and medical applications. In addition, the flexible center section maintains a reliable connection between boards that move with respect to one another, such as in robots.

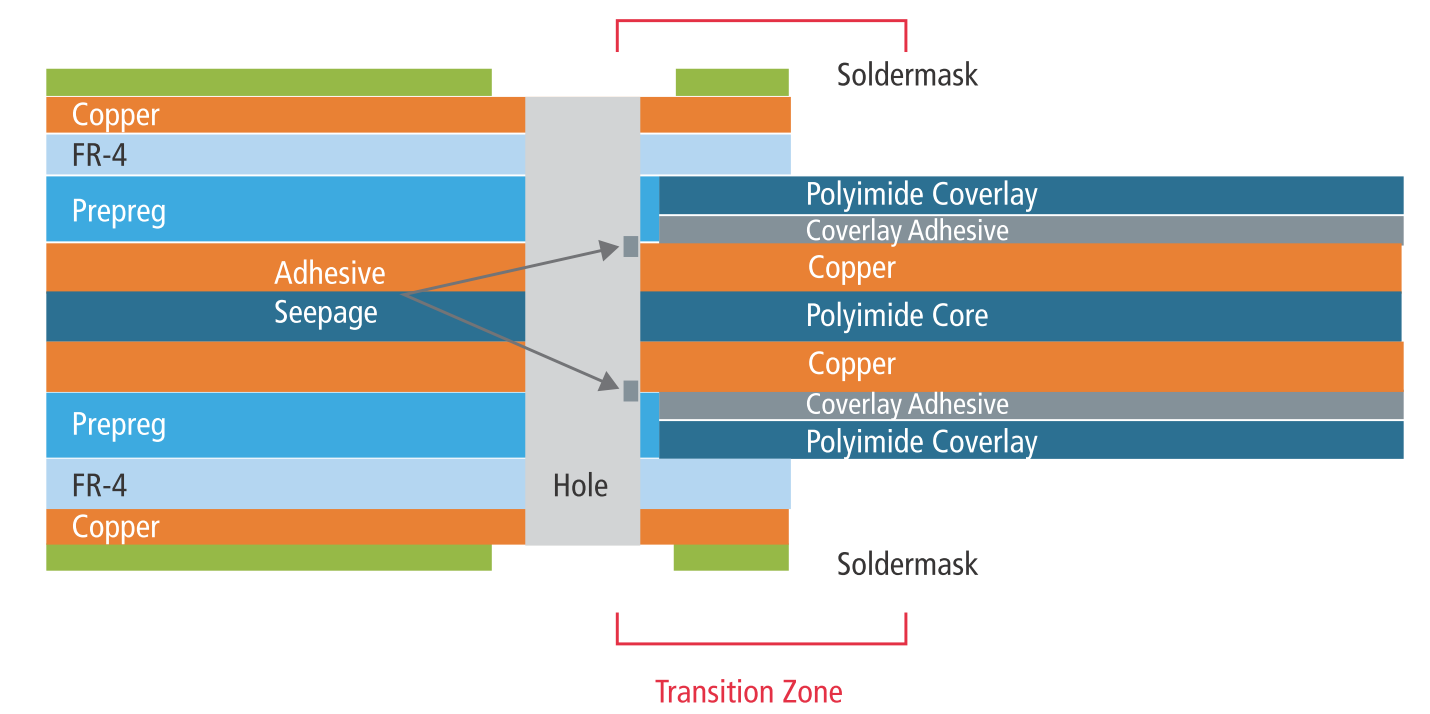

Assembling the boards requires resorting to new materials and design rules. (Source: Cadence)


Building such PCBs so that they work reliably resurrects Deming’s Dilemma. The boards consist of individual layers bound together. The end sections might include both rigid and flexible layers, while the center section would contain only flexible layers, thereby permitting bending. Each layer must function correctly for the assembled board to work. Comprehensively testing the finished board would prove difficult or impossible. You could exercise at least some of the components and replace any that fail, but repairing even a single layer would likely require disassembling the board—an expensive option if you could do it at all—or scrapping the board altogether. Cost-benefit analyses conducted over many years have concluded that more than a trivial amount of scrap can overwhelm other manufacturing costs, especially with boards as expensive as these.
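A rough calculation shows why "each layer must function correctly" becomes so costly as layer counts grow. The sketch below assumes that layer defects are independent of one another, which is a simplification, and the layer count and 98% per-layer figure are hypothetical numbers chosen only for illustration. The function name `stack_yield` is likewise invented here.

```python
import math


def stack_yield(layer_yields):
    """Probability that every layer in the stack is good, assuming independent defects."""
    for y in layer_yields:
        if not 0.0 <= y <= 1.0:
            raise ValueError(f"yield must be between 0 and 1, got {y}")
    return math.prod(layer_yields)


# Hypothetical example: eight layers, each good 98% of the time.
layers = [0.98] * 8
print(f"Chance an unverified stack has no bad layer: {stack_yield(layers):.3f}")
```

Under those assumptions, only about 85% of assembled boards would contain no defective layer, even though each individual layer looks nearly perfect. Small per-layer losses compound, and every bad layer found only after assembly takes the rest of the board with it.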

The only viable strategy? Hickey takes a page out of Deming’s philosophy and recommends verifying (and, if necessary, repairing) each layer before assembling the layers into the finished board. After that, a final, far-from-comprehensive test will expose component-level failures and other repairable defects, along with (hopefully not more than a few) irreparable board-level problems that condemn those boards to the “slush pile.”

Board designers and fabricators generally work at different facilities—or even different companies—dramatically increasing the turnaround time for each iteration of design verification and correction. Minimizing the number of iterations reduces overall development time and costs. Whenever you test works in progress, however, you have to establish design-rule checks, adding circuitry to facilitate that level of test when necessary. Hickey recommends including inter-layer checks that identify errors as early as possible, in order to analyze and repair them at that stage. “Having this capability avoids two frustrating, time-consuming steps.” The extra verification before board assembly shortens the design cycle by reducing the number of expensive and time-consuming test-rework-test iterations.

Design-rule checking can be manual or automated. Manual techniques rely primarily on the engineer’s skill and experience, while more complex situations require more elaborate tools. Automated verification software, in turn, must keep pace with new technologies. For example, medical, military and other high-reliability applications have introduced many new flex and surface finishes, and automated tools must account for those new materials to produce valid verification results.

The increased emphasis on ensuring that individual layers meet performance specifications before manufacturing a finished product brings us a step closer to Deming’s ideal. In the end, however, you still have to throw the switch and make sure that the finished system sings the right tune.

Reference: Automating Inter-layer In-design Checks in Rigid-flex PCBs

And thanks to Jon Titus