Nvidia Corp. and IBM Corp. will collaborate on GPU-accelerated versions of IBM's enterprise software and plan to integrate Nvidia's Tesla GPUs with IBM Power processors.

The partnership, announced this week at the Supercomputing Conference in Denver, aims to deliver technology that maximizes performance and efficiency for high-performance computing (HPC) workloads in scientific, engineering and big-data analytics applications.

The companies did not say when the integration of Tesla GPUs with Power processors would be completed. Nor did they specify whether the two would be integrated monolithically, on a single die, or who would do the work. Both IBM and Nvidia have recently announced that they are adopting processor licensing as a possible business model.

One goal of the collaboration is to let IBM customers process, secure and analyze massive volumes of streaming data more rapidly. In that respect, the IBM-Nvidia combination will compete with the Automata Processor from Micron Technology Inc., which was announced at the same conference.

"Companies are looking for new and more efficient ways to drive business value from Big Data and analytics," said Tom Rosamilia, senior vice president at IBM. "The combination of IBM and Nvidia processor technologies can provide clients with an advanced and efficient foundation to achieve this goal."

As a result of the partnership, IBM Power Systems will support scientific, engineering and visualization applications developed with Nvidia's Cuda programming model.

The partnership between Nvidia and IBM builds on the August announcement of the formation of the OpenPOWER Consortium, based on IBM's POWER microprocessor architecture. Consortium members—Google, IBM, Nvidia, Mellanox and Tyan—are working together to address server, networking and GPU-acceleration technology for data centers, IBM said at the time.

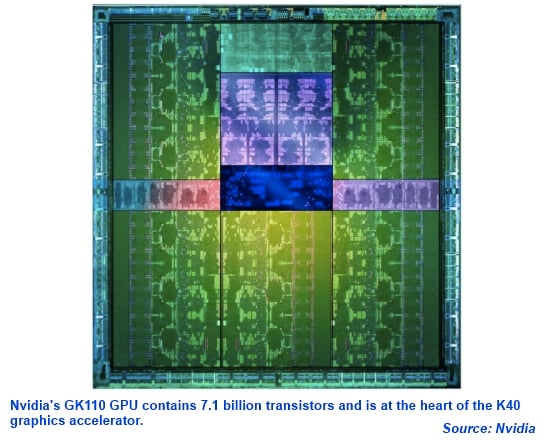

Nvidia also announced the launch of the Tesla K40 GPU accelerator module. The K40 is based on the company's Kepler GK110 graphics processor, which Taiwan Semiconductor Manufacturing Co. Ltd. manufactures in its 28-nm CMOS process.

The GK110 provides 2,880 Cuda processing cores, and the K40 board delivers peak floating-point performance of 4.29 teraflops in single precision and 1.43 teraflops in double precision. Other K40 features include 12 Gbytes of GDDR5 memory and the introduction of PCIe Gen-3 interconnect support, which roughly doubles the bandwidth available for moving data between host and GPU compared with PCIe Gen-2.
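The headline numbers follow from simple arithmetic. The Python sketch below reproduces them under assumptions that the article does not state: a 745-MHz base clock, one fused multiply-add (two floating-point operations) per unit per cycle, 960 double-precision units on the GK110 (15 SMX blocks of 64 each), and a 16-lane PCIe link. It is a back-of-envelope check of peak figures, not a measure of delivered application performance.

```python
# Back-of-envelope check of the Tesla K40 peak figures quoted above.
# ASSUMPTIONS (not from the article): 745-MHz base clock, one fused
# multiply-add (2 FLOPs) per unit per cycle, 960 FP64 units
# (15 SMX x 64), and a x16 PCIe link.

BASE_CLOCK_HZ = 745e6   # assumed K40 base clock
FLOPS_PER_FMA = 2       # a fused multiply-add counts as two operations


def peak_tflops(units: int, clock_hz: float = BASE_CLOCK_HZ) -> float:
    """Peak throughput in teraflops for `units` FMA-capable lanes."""
    return units * clock_hz * FLOPS_PER_FMA / 1e12


def pcie_gbytes_per_s(gtransfers: float, payload_bits: int, line_bits: int,
                      lanes: int = 16) -> float:
    """Raw one-direction PCIe bandwidth in Gbytes/s after line encoding."""
    bits_per_s = gtransfers * 1e9 * payload_bits / line_bits * lanes
    return bits_per_s / 8 / 1e9


sp = peak_tflops(2880)                     # 2,880 single-precision Cuda cores
dp = peak_tflops(15 * 64)                  # assumed 960 double-precision units
gen2 = pcie_gbytes_per_s(5.0, 8, 10)       # Gen-2: 5 GT/s, 8b/10b encoding
gen3 = pcie_gbytes_per_s(8.0, 128, 130)    # Gen-3: 8 GT/s, 128b/130b encoding

print(f"single precision: {sp:.2f} TFLOPS")
print(f"double precision: {dp:.2f} TFLOPS")
print(f"PCIe x16: Gen-2 {gen2:.2f} GB/s, Gen-3 {gen3:.2f} GB/s, "
      f"ratio {gen3 / gen2:.2f}x")
```

Under those assumptions the script prints 4.29 and 1.43 teraflops, and a Gen-3/Gen-2 bandwidth ratio of about 1.97. That is consistent with the "factor of two" claim. The gain is slightly under 2x because Gen-3's faster signaling rate is partly offset by its different line encoding.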

Shortly before the Supercomputing Conference, Nvidia also announced an upgrade to its Cuda parallel programming environment. Cuda 6 introduces unified memory, a single memory space shared by CPUs and GPUs, so programmers no longer have to copy data manually from one to the other. That makes it easier to add GPU acceleration support to a wide range of programming languages, Nvidia said. The company claimed that other changes, including new drop-in libraries, can accelerate applications by up to a factor of eight.
