User interfaces for embedded devices are too often just an afterthought during the design process. Simple buttons and maybe a dial or two are what we have been used to for the last couple of decades. Luckily, the emergence of touch interfaces in the last several years has brought embedded systems user interfaces closer to those we have come to expect in our cellphone and tablet-dominated world. The next evolution in user interfaces, gesture support, may breathe new life into embedded functionality and is particularly attractive for factory automation, industrial and medical settings.

Touch Interfaces

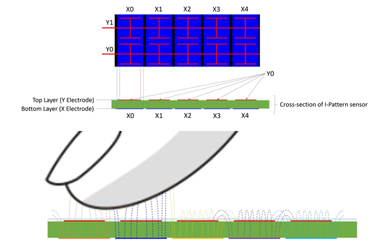

Figure 1: Example Touch Interface (Figure courtesy of Atmel Corporation) http://www.atmel.com/Images/Atmel-42442-QTouch-Surface-Design-Guide_ApplicationNote_AT11849.pdf

Traditional touch interfaces have been supported on MCUs for a few years now. Simple sliders, dials, buttons and more complicated controls are easily implemented. For example, Atmel Corporation has a series of devices using the QTouch surface sensor technology that turns a simple printed circuit board (PCB) surface into a touch-based input device. The PCB implementation of QTouch is illustrated in the cross section diagram in Figure 1. The Y electrodes are located on the top layer and the X electrodes are located on a lower layer. The mutual capacitance between these electrodes is sensed in order to determine the location of a finger, as shown at the bottom of Figure 1.

The electric fields present between the X- and Y-electrodes of the sensor are shown in the illustration and are depicted in different colors to indicate the different X-electrodes from which they originate. When the sensor is scanned, the X-electrodes are driven one at a time, in sequence, and the field lines from one sensor node interact with the adjacent nodes. When the finger moves away from one node and toward the next, the interaction with the first node progressively decreases and the interaction with the second node progressively increases. Comparing the signal at neighboring nodes gives the finger’s position, and tracking that position from scan to scan gives its speed.
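The interpolation between neighboring nodes can be sketched in a few lines of Python. This is only an illustration of the general idea (a signal-weighted centroid), not Atmel's QTouch algorithm; the function names, the 5 mm node pitch, the 10 ms scan interval and the signal values are all assumptions made for the example.

```python
def interpolate_position(deltas, pitch_mm=5.0):
    """Estimate finger position along one axis as a signal-weighted centroid.

    deltas: per-node signal changes caused by the finger (hypothetical units).
    pitch_mm: assumed spacing between adjacent nodes.
    Returns the position in mm, or None when no node sees a touch.
    """
    total = sum(deltas)
    if total <= 0:
        return None  # no touch detected on this axis
    # Node i sits at i * pitch_mm; weight each node position by its signal.
    return sum(i * pitch_mm * d for i, d in enumerate(deltas)) / total


def speed_mm_per_s(prev_pos, curr_pos, scan_interval_s):
    """Finger speed from two successive position estimates."""
    return (curr_pos - prev_pos) / scan_interval_s


# Finger sliding from node 1 toward node 2 across two scans:
# the first node's signal falls while the second node's signal rises.
scan_a = [0, 80, 20, 0]
scan_b = [0, 40, 60, 0]
pos_a = interpolate_position(scan_a)  # 6.0 mm
pos_b = interpolate_position(scan_b)  # 8.0 mm
velocity = speed_mm_per_s(pos_a, pos_b, scan_interval_s=0.01)
```

A real controller would also filter noise and apply a touch threshold before interpolating, but the centroid shows why a finger between two nodes can be located more finely than the node spacing.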

Once the touch location is determined, simple and intuitive commands with mechanical analogs can be added to a user interface. Button presses, moving dials and positioning sliders are all easily understood by the user and require little training. When needed, voice prompts or on-screen visuals can be used to illustrate, provide hints or otherwise communicate the user’s options. After a few uses, the interface becomes obvious.

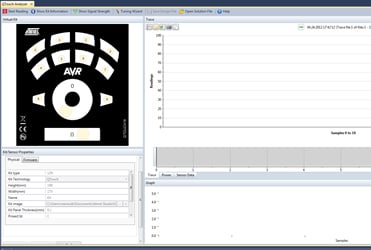

Figure 2: Touch Interface Software Development with the Atmel QTouch Analyzer (Figure courtesy of Atmel Corporation) http://www.atmel.com/webdoc/atmelstudio/atmelstudio.AVRStudio.Extending.QTCAnalyzer.html

Atmel even supplies a software tool that simplifies the development of simple touch interfaces. Shown in Figure 2, the Atmel QTouch Analyzer reads and interprets touch data sent from QTouch development kits during hardware development, testing and debugging. Several different views of recorded QTouch data are provided. The recorded signal strength can even be used by a tuning wizard to optimize the QTouch calibration settings.

Gesture-Based Interfaces

Figure 3: Gesture Recognition Technology in Action (Figure courtesy of Microchip Technology)

Gesture interfaces build on the technology used for touch interfaces, but extend the capability to measure location and movement above the sensor array. As an example, Microchip Technology’s GestIC technology utilizes an electric field for proximity sensing and tracking of the user’s hand or finger motion in free space. As seen in the graphic on the top of Figure 3, electric fields are generated by electrical charges and propagate three-dimensionally around the surface carrying the electrical charge. Applying direct voltages to an electrode results in a constant electric field, while applying alternating voltages makes the charges, and thus the field, vary over time. When the charge varies sinusoidally, the resulting electromagnetic wave is characterized by a wavelength, and if the wavelength is much larger than the electrode geometry, the result is a quasi-static electrical near field. This quasi-static field can be used for sensing conductive objects such as the human body.
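To get a feel for the "wavelength much larger than the electrode geometry" condition, the wavelength follows from the drive frequency as wavelength = c / f. The short Python sketch below works one example. The 100 kHz drive frequency, the 10 cm electrode size and the factor-of-1000 margin are illustrative assumptions, not figures taken from this article, and the helper names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def wavelength_m(frequency_hz):
    """Free-space wavelength of a sinusoidal field: lambda = c / f."""
    return SPEED_OF_LIGHT_M_PER_S / frequency_hz


def is_quasi_static(frequency_hz, electrode_size_m, min_ratio=1000.0):
    """True when the wavelength exceeds the electrode size by min_ratio.

    min_ratio is an assumed rule-of-thumb margin, not a published limit.
    """
    return wavelength_m(frequency_hz) >= min_ratio * electrode_size_m


# Assumed example values: a 100 kHz drive signal and a 10 cm electrode.
lam = wavelength_m(100e3)                 # about 3 km
near_field = is_quasi_static(100e3, 0.10)  # True: 3 km is far larger than 10 cm
```

With a wavelength of kilometers against an electrode of centimeters, the field around the sensor behaves as a slowly varying electrostatic field rather than a propagating wave, which is the regime the paragraph above describes.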

As seen in the illustration on the bottom of Figure 3, when a person’s hand or finger intrudes on the electrical field, the field becomes distorted. The field lines are drawn to the hand due to the conductivity of the human body and are shunted to ground, so the three-dimensional electric field decreases locally. Electrodes located at different positions sense these changes in order to locate the origin of the electric field distortion. This information is used to calculate the position, track movements and classify movement patterns into specific gestures.
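The chain from electrode readings to a recognized gesture can be sketched as follows. This is a deliberately simplified illustration, not the GestIC algorithm: it assumes four receive electrodes named north, south, east and west, assumes that the electrode nearest the hand sees the largest signal deviation, and uses made-up readings and thresholds.

```python
def estimate_xy(dev):
    """Estimate a normalized hand position from four electrode deviations.

    dev: dict with 'north', 'south', 'east', 'west' signal deviations (>= 0).
    Returns (x, y) with each coordinate in [-1, 1], or None if nothing is sensed.
    """
    total = dev["north"] + dev["south"] + dev["east"] + dev["west"]
    if total <= 0:
        return None
    x = (dev["east"] - dev["west"]) / total
    y = (dev["north"] - dev["south"]) / total
    return x, y


def classify_flick(track, min_travel=0.5):
    """Classify a tracked path as a flick based on its net displacement."""
    if len(track) < 2:
        return None
    dx = track[-1][0] - track[0][0]
    dy = track[-1][1] - track[0][1]
    if max(abs(dx), abs(dy)) < min_travel:
        return None  # hand did not travel far enough to count as a flick
    if abs(dx) >= abs(dy):
        return "flick_east" if dx > 0 else "flick_west"
    return "flick_north" if dy > 0 else "flick_south"


# Hypothetical readings as a hand sweeps from the west edge to the east edge.
frames = [
    {"north": 10, "south": 10, "east": 5, "west": 40},
    {"north": 15, "south": 15, "east": 20, "west": 20},
    {"north": 10, "south": 10, "east": 40, "west": 5},
]
positions = (estimate_xy(f) for f in frames)
track = [p for p in positions if p is not None]
gesture = classify_flick(track)
```

Production gesture engines typically use more robust classifiers and calibrated field models, but the three stages (position estimate, tracking, classification) mirror the ones named in the paragraph above.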

Once gestures have been identified, the user interface can get to work. Each user interface has its own characteristics and requirements, but it is best to use gestures that have some intuitive meaning for the user. Standard gestures have evolved, and will continue to evolve, based on common physical world uses. Figure 4 shows some typical gestures that might be included in a variety of user interfaces. Many of these are quite intuitive and need little if any training. For example, a wake-up command based on an approaching gesture is clear to the user and probably needs few if any ‘hints’ from the display. A wheel motion would be an obvious choice for setting a dial, while a flick would be fairly clear as a way to move between menus. A few uses of a gesture are all it takes to learn the key elements of the interface. The clear ‘iconography’ of gestures we bring with us from the real world is one of the main strengths of a gesture-based user interface, and in many cases the gesture is (fairly) universal.
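On the software side, mapping recognized gestures to interface actions can be as simple as a small dispatcher. The sketch below uses the three examples from the paragraph above (approach to wake, wheel to set a dial, flick to change menus). The `Panel` class, the gesture names and the menu labels are hypothetical and stand in for whatever the recognition layer actually reports.

```python
class Panel:
    """Toy user interface that reacts to already-recognized gestures."""

    def __init__(self, menus):
        self.menus = menus
        self.menu_index = 0
        self.dial = 0
        self.awake = False

    def handle(self, gesture, amount=0):
        if gesture == "approach":
            self.awake = True  # an approaching hand wakes the panel
        elif not self.awake:
            return  # ignore all other gestures until the panel is awake
        elif gesture == "wheel":
            self.dial += amount  # circular motion adjusts the dial
        elif gesture == "flick_east":
            self.menu_index = (self.menu_index + 1) % len(self.menus)
        elif gesture == "flick_west":
            self.menu_index = (self.menu_index - 1) % len(self.menus)

    @property
    def current_menu(self):
        return self.menus[self.menu_index]


panel = Panel(["Home", "Settings", "Status"])
panel.handle("wheel", amount=3)  # ignored: panel is still asleep
panel.handle("approach")
panel.handle("wheel", amount=3)
panel.handle("flick_east")
```

Keeping the mapping in one place like this makes it easy to adjust which gesture triggers which function as the interface is tested with real users.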

Figure 4: Example Touch and Gesture Functions (Figure courtesy of Microchip Technology) http://www.microchip.com/pagehandler/en-us/technology/gestic/home.html

Using a gesture interface in an embedded system provides additional benefits when it is inconvenient to use a touch interface. Industrial applications, for example, can have higher reliability when buttons, dials and even touch pads are replaced with a gesture interface. Eliminating mechanical contact, whether from a switch or a hand (or a hand in a bulky glove), can improve system lifetime and reduce service and maintenance calls. Gesture interfaces can also simplify the control of home automation, appliances and even light switches.

The Future of Embedded Interfaces

Over time, gesture recognition is expected to extend its range and sensitivity so that even more real world gestures can be mapped into user interface functions. Perhaps selecting a file will simply require the finger to brush over the tops of virtual file folders, and when the desired file is found, closing the thumb and index finger will select it. Just raise your hand and the selected file will be pulled out and opened. Pick it up again and drop it back into the folder to re-file it. Setting a thermostat might simply require you to virtually grasp the dial, pull it out, twist it to the desired setting and push it back in. With fully realized virtual interfaces, the physical world will supply an array of gesture iconography. Perhaps dials will only live on as virtual icons, similar to the way the telephone handset is now more often an icon on a screen than a physical device.

Get a Peek at the Future of Embedded User Interfaces

Gesture support promises to bring a variety of new and different user interface designs to embedded systems. As larger sensing distances and finer resolution become available, control over even complex processes becomes possible, perhaps mirroring the user interfaces we see in current science fiction films. To get a jump on the state of current touch and gesture technologies, you need not resort to time travel. Instead, you can attend the IHS Touch Gesture Motion and Emerging Displays Technologies Conference from Nov 12-13 in San Jose, CA. The agenda is packed with presentations, panels, exhibits and keynotes from industry and technology luminaries covering display technology, materials, algorithms, markets and cutting-edge research.

To contact the author of this article, email engineering360editors@ihs.com