"Moore's Law" is one of the few phrases from the engineering and electronics domain that resonates with the general public. Although not exactly sure what it refers to, many lay people know it has something to do with the incredible and unrelenting increase in computing power along with dramatic cuts in electronics prices over the years.

This "law" has been with us for 50 years. Gordon Moore published a three-page, carefully reasoned, highly readable article in the April 19, 1965, issue of the now-defunct industry trade publication Electronics. In the article "Cramming More Components onto Integrated Circuits," Moore, then director of the research and development laboratories at Fairchild Semiconductor, made this critical yet almost understated conjecture: "…the complexity for minimum component costs has increased at a rate of roughly a factor of two per year. Certainly over the short term this rate can be expected to continue, if not to increase. Over the longer term, the rate of increase is a bit more uncertain, although there is no reason to believe it will not remain nearly constant for at least 10 years."

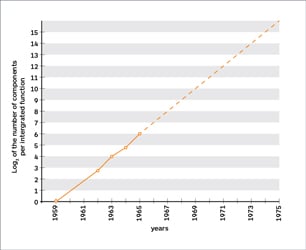

Moore proposed an exponential increase in device complexity, which plots as a straight line on a logarithmic scale against a linear time scale in years. Image source: Proceedings of the IEEE, Vol. 86, No. 1, January 1998

Note that his eponymous law is not a law at all in the scientific sense; instead, it is a speculative projection based on insight and expertise. Exactly how and when Moore's original prediction of "a factor of two per year" was revised to become the better-known phrase "every two years" is a subject for debate. Whether the period of Moore's Law is 12 or 24 months, the key point of his prediction is that complexity (whether just the number of devices per chip or overall performance) will be subject to the steady exponential doubling that Moore illustrated in his article with a log-linear analysis.
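The gap between a 12-month and a 24-month doubling period compounds quickly. As a rough sketch, steady doubling can be written as N(t) = N0 · 2^(t/T), where T is the doubling period. The function name `projected_complexity` and the starting count of 64 components are illustrative assumptions, not figures from Moore's article.

```python
import math


def projected_complexity(n0: float, years: float, doubling_period_years: float) -> float:
    """Return n0 doubled once per doubling period over the given span of years."""
    return n0 * 2 ** (years / doubling_period_years)


# Hypothetical starting point: 64 components per chip (illustrative only).
n0 = 64
for period in (1.0, 2.0):
    n10 = projected_complexity(n0, 10, period)
    # On a log2 scale the growth is a straight line with slope 1/period per year.
    print(f"doubling every {period:g} yr: x{n10 / n0:,.0f} after 10 years, "
          f"log2(count) = {math.log2(n10):.1f}")
```

Over ten years, yearly doubling multiplies the count by 2^10 = 1,024, while doubling every two years multiplies it by only 2^5 = 32. Either way, the logarithm of the count rises by a fixed amount each year, which is why the trend appears as a straight line on a log-linear plot.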

How closely has his prediction reflected reality? There are several ways to assess the actual state of integrated circuit (IC) complexity. For example, look at the number of transistors in successive generations of Intel microprocessors between 1970 and 2005, or use a broader measure that includes Intel and non-Intel devices from 1970 to 2011. By either measure, Moore was amazingly prescient and on target.
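One simple way to check the trend is to take two points on the transistor-count curve and solve for the doubling period they imply. The sketch below is only an illustration: the function name `doubling_period_years` is hypothetical, and the two data points are approximate, commonly cited transistor counts that do not come from this article.

```python
import math


def doubling_period_years(n_start: float, n_end: float, years_elapsed: float) -> float:
    """Solve n_end = n_start * 2**(years_elapsed / T) for the doubling period T."""
    if n_start <= 0 or n_end <= n_start or years_elapsed <= 0:
        raise ValueError("expects growth over a positive time span")
    return years_elapsed / math.log2(n_end / n_start)


# Approximate, commonly cited counts (assumed inputs, not taken from this article).
intel_4004 = (1971, 2_300)         # (year, transistors)
pentium_4 = (2000, 42_000_000)

period = doubling_period_years(
    intel_4004[1], pentium_4[1], pentium_4[0] - intel_4004[0]
)
print(f"implied doubling period: {period:.2f} years")
```

With these inputs the implied period comes out at roughly two years, in line with the "every two years" form of the law. A two-point estimate is crude; fitting a line to the logarithm of many data points would give a more robust figure.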

Moore's law is supported by the trend in the number of transistors in Intel microprocessors from 1970-2005. Image source: Intel

Moore was not just another industry pundit or commentator when he published his analysis and predictions. He was one of the eight co-founders of Fairchild Semiconductor Corp., having left Shockley Semiconductor in 1957; they were called the "traitorous eight" by transistor co-inventor William Shockley.

These men were the best and brightest in the semiconductor world with extraordinary expertise and insight in physics, chemistry, optics, manufacturing and many other academic and practical disciplines. Moore's credentials begin with degrees that include a doctorate in physical chemistry from the California Institute of Technology. He was described by the editors of Electronics in 1965 as "one of the new breed of electronic engineers, schooled in the physical sciences rather than in electronics." Moore subsequently went on to co-found Intel along with Fairchild's co-founder Robert Noyce in 1968, and was followed there by several other Fairchild co-founders. He served as chairman from 1979 to 1997, when he was given emeritus status.

Moore's law is further validated by including non-Intel CPUs and extending the timeline to 2011. Image source: WikiMedia.org

Moore's conjecture in 1965 was not just one of many predictive darts thrown by the pundit community that happened to hit it right. In his article, Moore clearly and methodically addresses the characteristics and virtues of silicon ICs including yield issues, thermal limits due to increasing density, packaging concerns, relative reliability compared to discrete-transistor circuits, the differences between integration of digital and analog functions and even briefly cites non-silicon substrates such as gallium arsenide (GaAs). He weaves these pieces together in a coherent, cogent, fully articulated framework with several conclusions including the one we now know as Moore's Law (it's not clear who first called it Moore's Law; he never did).

When Moore wrote his 1965 article, the IC was less than a decade old and still crude and somewhat cantankerous; the germanium IC was invented in 1958 by Jack Kilby at Texas Instruments. A much more challenging silicon version was developed by Noyce at Fairchild in 1959. Although ICs were in limited use by 1965 in military and space programs such as the Apollo moon-landing project, they were still limited in capacity, with 64- and 256-bit memory versions (yes, that's bits, not MB or even kB).

Tools and techniques were also in their infancy, with none of the modeling, simulation or process control we now take as givens. IC layouts (known as floor plans) were drafted by hand and painstakingly cut into rubylith film (a red plastic film that was used as a photographic negative). The team at Fairchild had to design and build their own "step and repeat" resist-exposure systems using whatever optical and mechanical parts they could make work.

The End of Moore's Law?

The promulgation of Moore's law led to the much-publicized semiconductor "road map" where successive generations of IC technology are planned, announced and implemented by leading vendors with support from the industry. Huge infrastructure is needed, including the companies that make the costly, complex production and test systems as well as the ultrapure materials.

As the physical geometry of ICs has shrunk with successive stages of Moore's law and the road map has evolved from a scale measured in micrometers to hundreds of nanometers and then just tens of nanometers, some experts have said "no more" is possible. Yet at each stage, the advances in materials, processes, packaging and production (including deeper insight into device physics, three-dimensional ICs, and optics that read like science fiction) have allowed Moore's conjecture largely to remain valid.

Still, there may be an end in sight. After all, as feature sizes shrink to the size of just a few atoms, quantum effects begin to make unpredictable things happen; single-atom defects can ruin an entire circuit. Further, the extreme economics of multi-billion-dollar R&D and production cost versus product benefit and return on investment calculations may make Moore's conjecture of 50 years ago no longer a viable proposition.

Regardless, a 50-year run for a prediction in an area where nothing stays the same for long stands as an amazing accomplishment. What makes Moore's law so fascinating is that it was simple and clear when he stated it, and it proved to be remarkably accurate for five decades, all in the face of the technology's astounding complexity and rapid pace of change.