Automotive advanced driver assistance systems (ADAS) technology developer Mobileye NV has provided some details of its fourth-generation collision avoidance processor family, the EyeQ4. The processor is due to begin sampling in the fourth quarter of 2015, with the goal of being designed into 2018 vehicles.

Mobileye (Jerusalem), a market leader in vision-based ADAS, staged an initial public offering in 2014. The company estimates that its products had been installed in approximately 4.5 million vehicles worldwide as of September 30, 2014.

Historically, EyeQ-series processors have been designed to accept input from a single (monocular) camera and to measure how quickly an object's image grows in size, using that rate to anticipate a collision and trigger a warning or emergency braking. The EyeQ4, however, accepts information from multiple cameras, radars and scanning-beam lasers to build a safety "cocoon" around the vehicle as part of a sensor fusion strategy, the company said.
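The monocular principle can be illustrated with the standard scale-expansion estimate of time to contact (TTC). This is a sketch of the general technique, not Mobileye's algorithm: it assumes a pinhole camera, a constant closing speed and a clean width measurement in pixels, and the 2.5 s warning threshold is a hypothetical value chosen only for illustration. Because image size s is inversely proportional to distance Z, two measurements taken dt seconds apart give s2/s1 = Z1/Z2, and solving for the remaining time Z2/v yields TTC = dt / (s2/s1 - 1).

```python
import math


def time_to_contact(size_prev: float, size_curr: float, dt: float) -> float:
    """Estimate time to contact (seconds) from two image-size measurements.

    Assumes a pinhole camera and a constant closing speed, so the object's
    image size is inversely proportional to its distance. Under those
    assumptions TTC = dt / (size_curr / size_prev - 1).
    """
    if size_prev <= 0 or dt <= 0:
        raise ValueError("size_prev and dt must be positive")
    expansion = size_curr / size_prev - 1.0
    if expansion <= 0:
        # The image is not growing, so the object is not getting closer.
        return math.inf
    return dt / expansion


def should_warn(ttc_s: float, threshold_s: float = 2.5) -> bool:
    """Hypothetical warning rule: warn when TTC falls below a fixed threshold."""
    return ttc_s < threshold_s


if __name__ == "__main__":
    # A vehicle ahead grows from 40 px to 50 px wide over 0.5 s.
    ttc = time_to_contact(40.0, 50.0, 0.5)
    print(f"TTC = {ttc:.2f} s, warn = {should_warn(ttc)}")
```

In this example the image expands by 25 percent in half a second, which corresponds to about two seconds before contact if nothing changes. A real system would filter noisy size measurements over many frames rather than rely on two samples.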

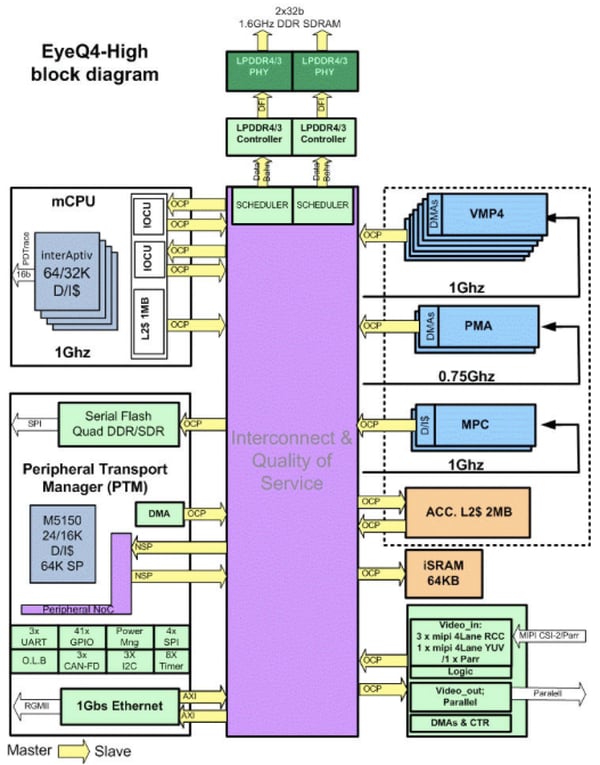

The EyeQ4 also features a number of custom processor cores introduced for the first time in the fourth generation. In total, it consists of 14 computing cores—four general-purpose multithreading CPU cores and 10 specialized vector accelerators optimized for visual processing and understanding.

Of the 10 specialized processors, six are the same Vector Microcode Processors (VMP) that have been running in the EyeQ2 and EyeQ3 generations. The EyeQ4 will see the first deployment of two Multithreaded Processing Cluster (MPC) cores and two Programmable Macro Array (PMA) cores. Mobileye claims the MPC is more versatile than any GPU applied to general-purpose computing or any other OpenCL accelerator. The company also maintains that the MPC has higher energy efficiency for the visual tasks than any CPU.

Block diagram of the EyeQ4 automotive visual processor. Source: Mobileye.
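The core breakdown can be tallied directly from the counts Mobileye gives. The dictionary below is purely illustrative bookkeeping (the labels are ours); it simply confirms that 6 VMPs, 2 MPCs and 2 PMAs make up the 10 accelerators, and that adding the 4 CPU cores gives 14.

```python
# Core counts as described for the EyeQ4; the dictionary layout is illustrative.
EYEQ4_CORES = {
    "general-purpose CPU (multithreaded)": 4,
    "Vector Microcode Processor (VMP)": 6,
    "Multithreaded Processing Cluster (MPC)": 2,
    "Programmable Macro Array (PMA)": 2,
}

# Everything other than the CPUs counts as a specialized vector accelerator.
ACCELERATORS = {
    name: count
    for name, count in EYEQ4_CORES.items()
    if not name.startswith("general-purpose")
}


def count_accelerators() -> int:
    """Number of specialized vector accelerators."""
    return sum(ACCELERATORS.values())


def count_total() -> int:
    """Number of computing cores of all types."""
    return sum(EYEQ4_CORES.values())


if __name__ == "__main__":
    print(f"accelerators: {count_accelerators()}, total cores: {count_total()}")
```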

The general-purpose CPUs are 32-bit interAptiv MIPS cores licensed from Imagination Technologies plc (Kings Langley, England). By comparison, the EyeQ2, launched in 2010, was based on two hyperthreaded 32-bit MIPS 34K cores, five Vision Computing Engines and three Vector Microcode Processors. According to Mobileye's website, the EyeQ2 is manufactured in 90nm CMOS technology and runs at a clock frequency of 332MHz. Mobileye's latest vision processor, the EyeQ3, is expected to appear in multiple vehicle launches in 2015.

The multiple cores in the EyeQ4 support different types of algorithms, and overall the processor is rated at more than 2.5 teraflops within a power budget of approximately 3W. The additional vector processors allow the EyeQ4 to run computer vision algorithms such as deep-layered neural networks and visual modelling while processing information from eight cameras simultaneously at 36 frames per second.
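A back-of-the-envelope calculation puts these figures in perspective. The sketch below uses only the numbers quoted above and treats the results as rough upper bounds: it assumes the full peak rating could be devoted to camera frames, which real workloads rarely achieve, and the function names are hypothetical.

```python
# Figures quoted for the EyeQ4. Results are idealized upper bounds, since
# peak teraflops are rarely sustained and not all compute goes to frames.
PEAK_FLOPS = 2.5e12   # "more than 2.5 teraflops"
POWER_W = 3.0         # "approximately 3W"
CAMERAS = 8
FPS_PER_CAMERA = 36


def frames_per_second(cameras: int, fps: int) -> int:
    """Total frames the processor must handle each second."""
    return cameras * fps


def flops_per_frame(peak_flops: float, total_fps: int) -> float:
    """Compute budget per frame if all peak FLOPS went to frame processing."""
    return peak_flops / total_fps


def flops_per_watt(peak_flops: float, power_w: float) -> float:
    """Peak energy efficiency in FLOPS per watt."""
    return peak_flops / power_w


if __name__ == "__main__":
    total = frames_per_second(CAMERAS, FPS_PER_CAMERA)
    print(f"{total} frames/s")
    print(f"~{flops_per_frame(PEAK_FLOPS, total) / 1e9:.1f} GFLOP per frame")
    print(f"~{flops_per_watt(PEAK_FLOPS, POWER_W) / 1e9:.0f} GFLOPS/W")
```

Eight cameras at 36 frames per second amount to 288 frames every second, leaving on the order of 8.7 GFLOP per frame at peak, at roughly 830 GFLOPS per watt.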

Mobileye said it plans to introduce three variants of the EyeQ4. The basic version will support monocular processing for collision avoidance applications, in compliance with European New Car Assessment Programme (Euro NCAP), U.S. National Highway Traffic Safety Administration (NHTSA) and other regulatory requirements.

A mid-range version, the EyeQ4M, will support up to a trifocal camera configuration, enabling high-end customer functions including semi-autonomous driving. The fully capable EyeQH will add fusion with radars and scanning-beam lasers for high-end customer functions. The EyeQH will have an average selling price approximately three times that of the basic version of the processor, Mobileye said.

"Supporting a camera-centric approach for autonomous driving is essential as the camera provides the richest source of information at the lowest cost package," said Prof. Amnon Shashua, co-founder, chief technology officer and chairman of Mobileye, in a statement.

Shashua said that reaching the functionality needed for autonomous driving requires a computing infrastructure capable of processing many cameras simultaneously and extracting the relevant information from each one.

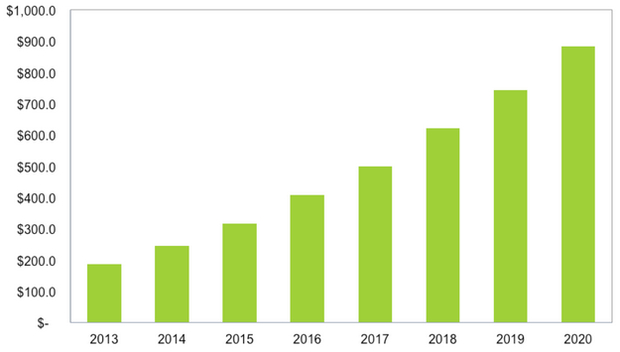

Global semiconductor revenue forecast for active-control ADAS systems (millions of US dollars). Source: IHS Technology May 2014.

Worldwide semiconductor revenue for active-control ADAS in vehicles was $187.3 million in 2013 and was forecast to reach $246.1 million in 2014, according to market research published in May 2014 by IHS Technology. By 2020, IHS expects the market to grow to $883.9 million, a compound annual growth rate of about 25 percent over the seven-year period.
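The quoted growth rate can be checked with the usual compound annual growth rate formula, CAGR = (V_end / V_start)^(1/n) - 1, where n = 7 years from 2013 to 2020. The helper below is a minimal sketch applied to the IHS figures; its name is ours and not part of any IHS tool.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


if __name__ == "__main__":
    # IHS figures in millions of US dollars: 2013 actual, 2020 forecast.
    rate = cagr(187.3, 883.9, 2020 - 2013)
    print(f"CAGR 2013-2020: {rate:.1%}")
```

The result is roughly 24.8 percent per year, consistent with the 25 percent figure IHS reports.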

According to IHS Automotive analysis, Automatic Emergency Braking (AEB) can typically be implemented with a single type of sensor. However, the implementation depends on the Automotive Safety Integrity Level (ASIL) being targeted. For higher ASIL ratings, the preferred solution is likely to be a radar sensor backed by an optical sensor for redundancy.

Mobileye said it secured the first design win for EyeQ4 from a European car manufacturer, with production to start in early 2018. The EyeQ4 is expected to be available in volume in 2018.

Questions or comments on this story? Contact: peter.clarke@globalspec.com
