Through the early part of the 21st century, artificial intelligence (AI) lay largely dormant, with industry making little use of it in practical applications. Compute bottlenecks were certainly a barrier to commercialization, but as Moore’s law has advanced, so has the development of machine learning (ML) and AI architectures. One area that has had trouble keeping up with the compute demands of these applications is the hardware used to implement ML and AI models.

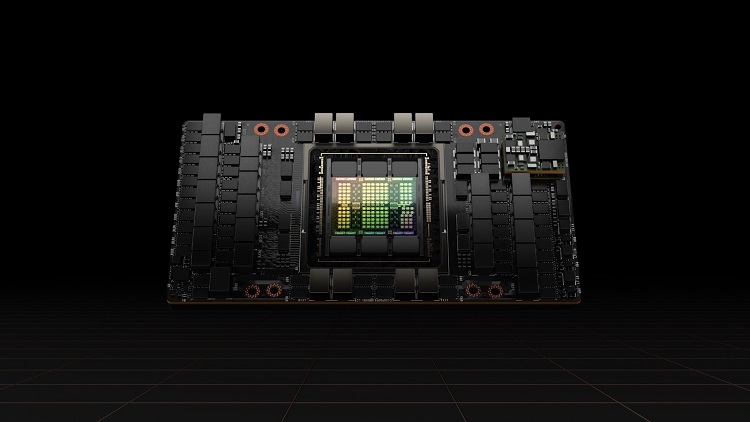

It is true that graphics processing units (GPUs) are still the main AI workhorse at the data center level. PCIe-based AI accelerators, which basically mimicked the GPU architecture to implement tensor calculations in parallel, first hit the market in 2018. Since that time, the chip architectures available to support embedded AI have continued to evolve. Today, it is easier than ever to start deploying AI in the field, and the chipsets required in the future may look very different.

AI chipsets and processors over time

For a significant period of time, GPUs were the kings of embedded AI compute due to the inefficiency of using traditional processors for high-compute AI workloads. Although GPUs dominated in data centers and some specialized applications, that quickly started to change as AI-capable hardware platforms hit the market in the late 2010s. The chipsets used in AI have continued to follow this trend, generally taking one of two forms:

1. A coprocessor architecture, where AI capabilities are implemented in an external chip paired with a traditional system controller (MCU, MPU, FPGA).

2. A dedicated processor that implements most or all of the required AI capabilities on the device without the need for an external accelerator.

Point #1 came first because it had the lowest barrier to entry; these chips simply interfaced with a host processor over a standard computing interface (generally PCIe). Two of the landmark products that have driven AI toward the edge and into the end device are NVIDIA’s Jetson product line and the Google Coral AI accelerator. Other products have hit the market, with most targeting mobile or IoT applications.

Those earlier products laid the foundation for some of the newer chipsets that have hit the market in recent years, as well as for the exploration of ML applications that can run on traditional MCUs. Now Point #2 is starting to materialize with some new approaches to AI compute in the semiconductor industry.

On-chip AI accelerators

Although Coral is one of the earlier known accelerator chips that could be implemented alongside a standard MCU, new heterogeneous products are coming to market that implement an on-die or in-package AI processing block. Bringing the AI accelerator block directly onto the die or into the package allows for more efficient operation in high compute scenarios. These chips are intended for inference on the end device, such as an IoT product, while training might be performed in the cloud or at the edge. Once we look at the cloud/data center level, a different hardware approach is taken for training.
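The split between training in the cloud and inference on the end device can be sketched in a few lines. This is a minimal illustration only, assuming a trivial one-variable linear model; the function names `train_in_cloud` and `infer_on_device` and the JSON hand-off format are hypothetical and do not correspond to any vendor toolchain.

```python
import json


def train_in_cloud(samples, epochs=2000, lr=0.05):
    """Fit y = w*x + b by gradient descent.

    Stands in for the compute-heavy training step that would run in the
    cloud or at the edge. Returns the learned parameters as a JSON string,
    which plays the role of the model file shipped to the device.
    """
    w, b = 0.0, 0.0
    n = len(samples)
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in samples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in samples) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return json.dumps({"w": w, "b": b})


def infer_on_device(model_blob, x):
    """Forward pass only: the device loads fixed parameters and never trains."""
    params = json.loads(model_blob)
    return params["w"] * x + params["b"]


# Hypothetical training data drawn from y = 2x + 1.
samples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
model_blob = train_in_cloud(samples)
prediction = infer_on_device(model_blob, 4.0)
print(f"prediction for x=4.0: {prediction:.3f}")
```

The device-side function only needs multiply and add operations on fixed parameters, which is the kind of workload an on-die accelerator block is meant to handle.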

In-memory compute

Perhaps the newest advance for higher compute workloads on the end device or in the data center involves computation in the system memory. This gives the same efficiency advantage as an accelerator integrated into a die or package. The difference is that in-memory processing performs the computation directly where the model definitions are stored, so the model data does not have to be moved to a separate processor before inference can run. From the host processor's point of view, this is really a form of external acceleration.
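A rough way to see the appeal of computing where the model is stored is to count how many values must cross the memory interface for one matrix-vector product. The sketch below is a toy counting model, not a benchmark: it assumes no caching or weight reuse in the conventional case, and both function names are hypothetical.

```python
def values_moved_conventional(rows, cols):
    """Values crossing the memory interface for one matrix-vector product
    when every weight must be fetched into a separate processor.

    Assumption: no caching, so all rows * cols weights are transferred.
    """
    weights = rows * cols
    inputs = cols
    outputs = rows
    return weights + inputs + outputs


def values_moved_in_memory(rows, cols):
    """Values crossing the memory interface when the product is computed
    inside the memory that holds the weights: only the input vector goes
    in and the output vector comes out.
    """
    return cols + rows


# Hypothetical layer size chosen only for illustration.
rows, cols = 256, 512
conventional = values_moved_conventional(rows, cols)
in_memory = values_moved_in_memory(rows, cols)
print(f"conventional: {conventional} values, in-memory: {in_memory} values")
```

Under these assumptions the weight matrix dominates the traffic in the conventional case, which is why keeping the weights in place is attractive for inference.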

FPGA AI accelerators

A field-programmable gate array (FPGA) is an excellent choice for implementing an AI application for an embedded system as long as the developer can fully optimize the application code to ensure efficient compute. FPGAs come with other benefits as well, namely their reconfigurability. FPGAs also provide some level of security that is highly desirable in aerospace settings, and FPGA vendors can help instantiate many of the required interfaces in these systems through their IP portfolios.

The advantages of FPGAs are not lost on semiconductor companies that are better known for their traditional compute products. AMD recently announced that it will incorporate an FPGA block into its EPYC processor line specifically to support on-chip AI applications. This follows the same track as IoT SoCs with on-chip AI accelerators: supporting customization with more compute in smaller footprints.

RISC-V processors

It has long been assumed that standard computing architectures (Arm and x86) would never be able to implement AI computation efficiently for high-compute workloads. Some companies are hoping RISC-V will change that dynamic.

RISC-V is an open-source instruction set architecture (ISA) for development of processors based on reduced instruction set principles. Although the architecture was introduced in 2010, the major growth in adoption in recent years is largely driven by AI implementation on new processors, ranging from FPGAs to custom SoCs and accelerators.

This open-source ISA has been so successful that the biggest semiconductor names are targeting it for use in their newest AI processor or accelerator products. Microchip provides support for RISC-V implementation in their PolarFire FPGA SoCs, and Intel is targeting the use of RISC-V for Level 4 autonomous driving with their upcoming Mobileye EyeQ Ultra platform.

Reimagining transistors as analog elements

Around 2020, some academic research groups and a few startups were implementing custom transistor architectures for their on-die accelerator blocks. Essentially, this requires running the transistors in the AI block as analog components operating in the linear range and implementing an analog computing approach that mimics the weightings between neurons in a neural network. The result is faster computation in a smaller footprint because numerical values can be explicitly represented as an analog quantity in a single transistor rather than using multiple transistors to represent binary data.
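The idea of representing a weighted sum with analog quantities can be illustrated numerically. The following is an idealized sketch rather than a device model: it assumes each weight is stored as a conductance, each input is applied as a voltage, and the resulting currents add on a shared output line. The function names and the optional noise term are hypothetical.

```python
import random


def analog_weighted_sum(voltages, conductances, noise_std=0.0, seed=None):
    """Idealized analog multiply-accumulate.

    Assumption: each weight is a conductance G and each input is a voltage V.
    Every element contributes a current I = G * V, and the currents sum on a
    shared wire. Optional Gaussian noise stands in for analog imperfections.
    """
    if len(voltages) != len(conductances):
        raise ValueError("need one conductance per input voltage")
    rng = random.Random(seed)
    total_current = 0.0
    for v, g in zip(voltages, conductances):
        current = g * v
        if noise_std > 0.0:
            current += rng.gauss(0.0, noise_std)
        total_current += current
    return total_current


def digital_weighted_sum(inputs, weights):
    """Reference weighted sum as a digital processor would compute it."""
    return sum(x * w for x, w in zip(inputs, weights))


# Hypothetical neuron with three inputs.
inputs = [0.5, 1.0, 0.25]
weights = [2.0, 1.0, 4.0]
ideal = analog_weighted_sum(inputs, weights)
noisy = analog_weighted_sum(inputs, weights, noise_std=0.01, seed=0)
reference = digital_weighted_sum(inputs, weights)
print(f"ideal analog: {ideal}, noisy analog: {noisy:.4f}, digital: {reference}")
```

In the ideal case the analog result matches the digital weighted sum; the noise term hints at the precision trade-off an analog approach has to manage.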

Whether this approach takes hold and becomes commercialized at a broad scale is debatable. At the moment, established semiconductor companies and startups are working hard to adapt their best-in-class products to support more efficient AI computation, rather than taking the analog approach. Even as chips move into new technology nodes and feature densities increase, expect the chipsets targeting advanced applications like AI to continue evolving.