Researchers are developing robots that are becoming more integrated into human life every day. Yet robots are still almost completely dependent on humans for their thoughts and plans (except, of course, Sophia, who is now a Saudi Arabian citizen). Now, research teams from Brown University and MIT are attempting to help robots think and plan abstractly.

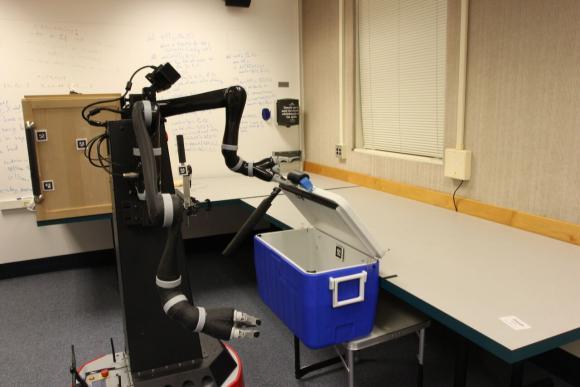

New research shows that robots can learn abstract representations of the world that are useful in planning for multi-step tasks, something that's monumentally difficult for robots to do. Here, a robot learns useful abstractions about the world by executing a set of motor skills. (Source: Intelligent Robot Lab / Brown University)

The teams have developed robots that can plan multi-step tasks using abstract representations of the world, bringing them a step closer to robots that can think like people.

Planning has proven difficult for robots because they lack the ability to reason about the world beyond their programming. Robots see the world as nothing more than the pixels captured by their cameras. Likewise, their ability to act is limited to the capabilities of the gears, joints and parts that make them up. As a result, robots are limited in how they interact with and understand their surroundings.

"That low-level interface with the world makes it really hard to do decide what to do," said George Konidaris, an assistant professor of computer science at Brown and the lead author of the new study. "Imagine how hard it would be to plan something as simple as a trip to the grocery store if you had to think about each and every muscle you'd flex to get there and imagine in advance and in detail the terabytes of visual data that would pass through your retinas along the way. You'd immediately get bogged down in the detail. People, of course, don't plan that way. We're able to introduce abstract concepts that throw away that huge mass of irrelevant detail and focus only on what is important."

This problem is not limited to basic robots. Even state-of-the-art robots lack an understanding of the world and cannot plan their interactions. Some robots can perform tasks that take multiple steps, but there is usually a human programmer behind the robot, spoon-feeding it what to do. For robots to be fully autonomous, they need to overcome this problem and learn on their own.

To develop robots that have more autonomous thinking, the research teams focused on one of the two categories of abstractions -- perceptual abstraction. Procedural abstractions, programs made out of low-level movements combined to make higher-level skills, have been thoroughly studied. Meanwhile, perceptual abstraction, which helps robots figure out their surroundings, has far less research behind it, so the researchers turned their focus to this category.

"Our work shows that once a robot has high-level motor skills, it can automatically construct a compatible high-level symbolic representation of the world -- one that is probably suitable for planning using those skills," Konidaris said.

The research teams used a robot named Ana (Anathema Device). They set up a room containing a cupboard, a cooler, a switch that controls the cupboard light, and a bottle. The goal was to have Ana manipulate the objects in the room. Beforehand, they gave her a set of high-level motor skills that would allow her to manipulate the objects. Then they sent Ana into the room on her own and recorded sensory data before and after she executed each skill. They then fed this data into a machine-learning algorithm.

The testing showed that Ana could learn an abstract description of the room that contained only the details she needed to complete a given skill. For example, she learned that to open the cooler, she needed to be standing directly in front of it without holding anything. She was given only the skill to open the cooler, but through interacting with the environment, she learned how to close it.

"These were all the important abstract concepts about her surroundings," Konidaris said. "Doors need to be closed before they can be opened. You can't get the bottle out of the cupboard unless it's open, and so on. And she was able to learn them just by executing her skills and seeing what happens."

After she proved that she could learn about the environment, the research team asked Ana to plan something. In this case, they asked her to take a bottle from the cooler and put it in the cupboard. Ana was able to successfully complete this task.
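Once skills are described in abstract terms, planning becomes a search over a small set of symbolic states rather than over pixels and joint angles. The following Python sketch illustrates this with a breadth-first search for the bottle task. It is an illustration under stated assumptions, not the planner used in the study: the symbol names, the skill definitions in `SKILLS` and the `find_plan` function are all hypothetical and written by hand here, whereas Ana learned her representation from experience.

```python
from collections import deque
from typing import FrozenSet, List, NamedTuple, Optional


class Skill(NamedTuple):
    name: str
    pre: FrozenSet[str]     # symbols that must hold to run the skill
    add: FrozenSet[str]     # symbols made true by the skill
    delete: FrozenSet[str]  # symbols made false by the skill


def S(*names: str) -> FrozenSet[str]:
    return frozenset(names)


def apply_skill(state: FrozenSet[str], skill: Skill) -> FrozenSet[str]:
    return (state - skill.delete) | skill.add


def find_plan(start: FrozenSet[str], goal: FrozenSet[str],
              skills: List[Skill]) -> Optional[List[str]]:
    """Breadth-first search over abstract states; returns a shortest plan."""
    frontier = deque([(start, [])])
    seen = {start}
    while frontier:
        state, steps = frontier.popleft()
        if goal <= state:
            return steps
        for skill in skills:
            if skill.pre <= state:
                nxt = apply_skill(state, skill)
                if nxt not in seen:
                    seen.add(nxt)
                    frontier.append((nxt, steps + [skill.name]))
    return None  # goal unreachable with these skills


# Hand-written skill models (assumed for illustration only).
SKILLS = [
    Skill("go_to_cooler", S("at_cupboard"), S("at_cooler"), S("at_cupboard")),
    Skill("go_to_cupboard", S("at_cooler"), S("at_cupboard"), S("at_cooler")),
    Skill("open_cooler", S("at_cooler", "hands_empty", "cooler_closed"),
          S("cooler_open"), S("cooler_closed")),
    Skill("open_cupboard", S("at_cupboard", "hands_empty", "cupboard_closed"),
          S("cupboard_open"), S("cupboard_closed")),
    Skill("pick_bottle",
          S("at_cooler", "cooler_open", "hands_empty", "bottle_in_cooler"),
          S("holding_bottle"), S("hands_empty", "bottle_in_cooler")),
    Skill("place_bottle", S("at_cupboard", "cupboard_open", "holding_bottle"),
          S("bottle_in_cupboard", "hands_empty"), S("holding_bottle")),
]

START = S("at_cooler", "hands_empty", "cooler_closed",
          "cupboard_closed", "bottle_in_cooler")
GOAL = S("bottle_in_cupboard")

steps = find_plan(START, GOAL, SKILLS)
print(steps)
```

Because opening the cupboard requires empty hands in this toy model, any valid plan has to open it before picking up the bottle. That is the kind of ordering constraint that falls out of the abstract description without any reasoning about individual motor commands.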

"We didn't provide Ana with any of the abstract representations she needed to plan for the task," Konidaris said. "She learned those abstractions on her own, and once she had them, planning was easy. She found that plan in only about four milliseconds."

This is a huge development in adding artificial intelligence to robotics.

"We believe that allowing our robots to plan and learn in the abstract rather than the concrete will be fundamental to building truly intelligent robots," said Konidaris.

This research was published in the Journal of Artificial Intelligence Research.