TeraDeep Inc. (Santa Clara, Calif.) was formed in 2013 as a spin-off from Purdue University (West Lafayette, Indiana) to commercialize research into multilayer convolutional neural networks as a means of efficient processing for such tasks as cognitive vision processing.

The company has developed an FPGA-based hardware module to show off the capabilities of such networks. It calls this the nn-X processor and it is pitching the technology as something that could be added to mobile phones, tablet computers and wearable equipment to perform image recognition and scene classification without flattening the batteries.

Potential applications for such hardware-based networks are clearly much broader, for example in classifying medical images or in driver assistance systems, but on its website the company states it is here to help cell phones, mobile and wearable devices see the world as human beings do. Users are already storing thousands of photographs on smartphones and there is an argument that wearable items such as Google Glass could benefit from a neural networking approach to classifying and searching the large amounts of visual data that they could acquire.

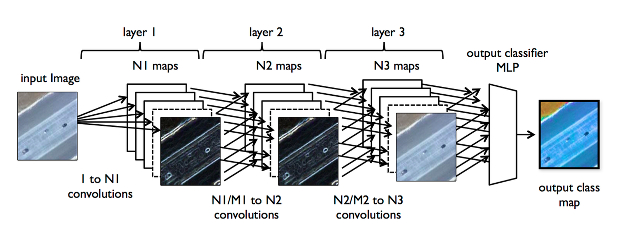

Networks are composed of multiple layers where each layer performs a function. Source: TeraDeep.

The company is pursuing an intellectual property licensing business model, according to reports that quote company co-founder Eugenio Culurciello, an associate professor in Purdue University's Weldon School of Biomedical Engineering and the Department of Psychological Studies.

TeraDeep will likely want to demonstrate its technology in FPGA form, and possibly in a custom-designed system-chip, and then go on to license variants of its nn-X processor to companies such as Qualcomm, Apple and Samsung for inclusion in their application processors.

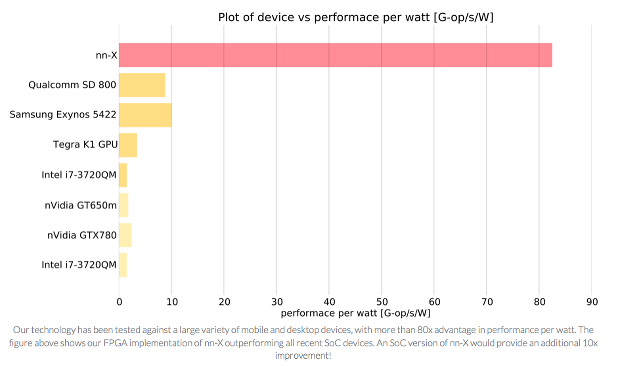

The company has results data on its website in which it claims that running its neural network technology on an FPGA is 8 times more power efficient than running it on an Exynos 5422 or Snapdragon 800 applications processor. Hosting the nn-X on a custom SoC would provide a further 10-fold improvement in efficiency, for an 80x improvement over software running natively on an application processor, the company states.
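The 80x figure is simply the two claimed gains compounded. A minimal sketch of that arithmetic follows; the 8x and 10x values are the company's own claims as reported here, and the function name `combined_gain` is a hypothetical helper chosen for illustration.

```python
def combined_gain(*stage_gains):
    """Multiply successive efficiency gains into one overall factor."""
    total = 1.0
    for gain in stage_gains:
        total *= gain
    return total


# Claimed figures from the article, not independent measurements.
fpga_vs_app_processor = 8.0   # FPGA nn-X vs. software on an application processor
custom_soc_vs_fpga = 10.0     # custom SoC vs. the FPGA implementation

overall = combined_gain(fpga_vs_app_processor, custom_soc_vs_fpga)
print(f"Claimed overall efficiency gain: {overall:.0f}x")
```

The gains multiply rather than add because each one is stated relative to the previous implementation, not to the original software baseline.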

Qualcomm and Intel have both already declared an interest in adding neural network accelerators to their conventional software-programmable processors (see Intel Follows Qualcomm Down Neural Network Path). Qualcomm is working with a San Diego-based, research-oriented company called Brain Corp., and Qualcomm's Zeroth processor is due to be released to researchers and software developers some time in 2014.

FPGA implementation of nn-X outperforms the same functionality running in software on application processors. Source: TeraDeep.

Didier Lacroix, who co-founded TeraDeep with Culurciello, declined to answer specific questions about the company, saying: "We will be ready to reveal our company plans at the same time we roll out our first product." Culurciello is described as the "leader" of TeraDeep on its website while semiconductor industry veteran Lacroix is described as "business leader." Lacroix did tell Electronics360 that TeraDeep is self-funded to date.

From TeraDeep and Purdue presentations it is known that the nn-X processor is designed to connect to an ARM processor and is made up of multiple accelerators that perform the typical operations in deep neural networks and scene understanding. It has been implemented on Zedboard and ZC706 development kits for the Zynq 7000 series chips that include ARM cores and FPGA fabric.
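For readers unfamiliar with the "typical operations" in question, one layer of a convolutional network usually chains a convolution, a nonlinearity and a pooling step. The following pure-Python sketch shows that chain on a tiny image. It is a generic illustration only, not TeraDeep's design; the function names and the choice of ReLU and 2x2 max pooling are assumptions made for the example.

```python
def conv2d(image, kernel):
    """Slide the kernel over the image (no padding) and sum the products."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(fmap):
    """Nonlinearity: clamp negative responses to zero."""
    return [[max(0, v) for v in row] for row in fmap]


def max_pool(fmap, size=2):
    """Keep the largest value in each non-overlapping size x size window."""
    rows, cols = len(fmap) // size, len(fmap[0]) // size
    return [
        [
            max(fmap[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(cols)
        ]
        for r in range(rows)
    ]


def layer(image, kernel):
    """One illustrative layer: convolution, then ReLU, then pooling."""
    return max_pool(relu(conv2d(image, kernel)))


# A 5x5 image with a dark-to-bright vertical edge, and an edge-detecting kernel.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
kernel = [[-1, 1], [-1, 1]]
features = layer(image, kernel)
print(features)
```

A deep network stacks several such layers, feeding each layer's output into the next. The inner multiply-accumulate loop in `conv2d` dominates the work, which is why it is the usual target for dedicated hardware.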

There is a single software development kit for nn-X that spans platforms from servers to cell phones, and the neural networks run on an intermediate open-source software layer called Torch. Torch7 is a computing framework with support for machine learning algorithms, and neural networks in particular. It is scripted in the Lua language, executed by the LuaJIT just-in-time compiler, on top of an underlying C implementation.

Research findings were presented in a poster paper during the Neural Information Processing Systems conference held in Nevada in December 2013.

"Now we have an approach for potentially embedding this capability onto mobile devices, which could enable these devices to analyze videos or pictures the way you do now over the Internet," Culurciello said, in a news release posted on the Purdue University website in March.

Related links and articles:

News articles:

Intel Follows Qualcomm Down Neural Network Path

Qualcomm Working on Neural Processor Core