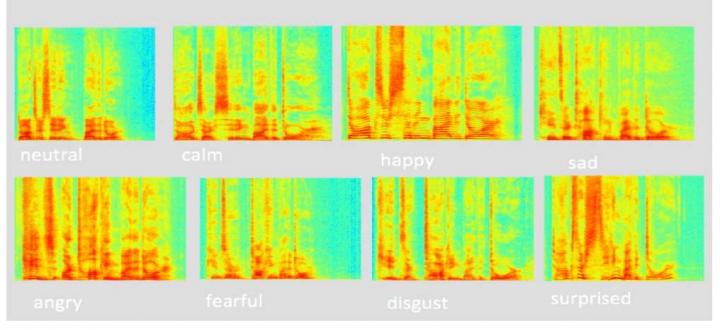

Spectrograms of the phrase 'Kids are talking by the door' pronounced with different emotions. Source: The Higher School of Economics

Researchers from the Faculty of Informatics, Mathematics and Computer Science at the Higher School of Economics have created an automatic system that is capable of identifying emotions based on the sound of a human voice.

Computers can already convert speech into text successfully, but the emotional component, which is important for conveying meaning, has been neglected. Even a simple question like “Is everything okay?” and the answer “Of course it is!” can carry emotions ranging from calm to cheerful.

Neural networks consist of interconnected processors and are capable of learning, analysis and synthesis. Such a smart system surpasses traditional algorithms in that the exchange between a person and a computer becomes more interactive.

HSE researchers Anastasia Popova, Alexander Rassadin and Alexander Ponomarenko have trained a neural network to recognize eight different emotions: neutral, calm, happy, sad, angry, scared, disgusted and surprised. In 70 percent of cases, the computer identified the emotion correctly, according to the researchers.

The researchers transformed the sound into images called spectrograms, which allowed them to work with sound using methods developed for image recognition. The research used a deep convolutional neural network with the VGG-16 architecture.
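To make the sound-to-image step concrete, here is a minimal sketch of how a waveform can be turned into a spectrogram image. It is not the researchers' code: the function names `log_spectrogram` and `to_image`, the frame length, the hop size and the synthetic test tone are all assumptions chosen for illustration, and only NumPy is used.

```python
import numpy as np

def log_spectrogram(signal, frame_len=512, hop=128):
    """Return a log-magnitude spectrogram of shape (freq_bins, frames).

    frame_len and hop are illustrative defaults, not values from the paper.
    """
    signal = np.asarray(signal, dtype=np.float64)
    if signal.size < frame_len:
        raise ValueError("signal is shorter than one frame")
    window = np.hanning(frame_len)
    n_frames = 1 + (signal.size - frame_len) // hop
    # Slice the signal into overlapping frames and apply the window to each.
    frames = np.stack([
        signal[i * hop : i * hop + frame_len] * window
        for i in range(n_frames)
    ])
    # One-sided FFT magnitude per frame, then log compression.
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    return np.log1p(magnitude).T

def to_image(spec):
    """Scale a spectrogram to an 8-bit grayscale image (values 0..255)."""
    lo, hi = spec.min(), spec.max()
    scaled = (spec - lo) / (hi - lo) if hi > lo else np.zeros_like(spec)
    return (scaled * 255).astype(np.uint8)

# Synthetic stand-in for a speech recording: one second of a 440 Hz tone
# sampled at 16 kHz (assumed values, used only so the sketch is runnable).
sample_rate = 16000
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 440 * t)

image = to_image(log_spectrogram(tone))
print(image.shape, image.dtype)
```

The resulting 2-D array can be handled like any grayscale picture. A network such as VGG-16 typically expects a fixed-size, three-channel input, so an image like this would usually be resized and replicated across channels before being fed to the network.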

The researchers say the program successfully distinguishes neutral and calm tones, but happiness and surprise are not always recognized. Happiness is often perceived as fear or sadness, and surprise is interpreted as disgust.
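Mix-ups of this kind are usually read off a confusion matrix, which counts how often each true emotion was assigned each predicted label. The sketch below shows the idea. The label lists are made-up toy values, not results from the study, and the helper name `confusion_matrix` is an assumption for illustration.

```python
import numpy as np

EMOTIONS = ["neutral", "calm", "happy", "sad",
            "angry", "scared", "disgusted", "surprised"]

def confusion_matrix(true_labels, predicted_labels, classes=EMOTIONS):
    """Rows are true emotions, columns are predicted emotions."""
    index = {name: i for i, name in enumerate(classes)}
    matrix = np.zeros((len(classes), len(classes)), dtype=int)
    for true_name, predicted_name in zip(true_labels, predicted_labels):
        matrix[index[true_name], index[predicted_name]] += 1
    return matrix

# Toy labels invented for this example only (NOT data from the paper).
true = ["happy", "happy", "happy", "surprised", "surprised", "calm"]
pred = ["happy", "scared", "sad", "disgusted", "surprised", "calm"]

cm = confusion_matrix(true, pred)
# Overall accuracy is the share of samples on the diagonal.
accuracy = np.trace(cm) / cm.sum()

happy = EMOTIONS.index("happy")
print("happy predicted as scared:", cm[happy, EMOTIONS.index("scared")])
print("accuracy on toy data:", accuracy)
```

Off-diagonal cells with large counts, such as the row for happiness under the columns for fear or sadness, are where a classifier confuses one emotion with another.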

The paper on this research was presented at Neuroinformatics 2017.