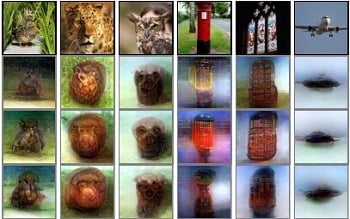

The three reconstructed images correspond to reconstructions from three different subjects. Source: Kamitani Lab/Kyoto University


Can a computer decipher your thoughts? A deep image reconstruction technique developed by Kyoto University researchers visualizes perceptual content from human brain activity. Unlike previously tested systems based on binary pixels, this deep neural network can decode a more sophisticated set of images from three categories, shown for varying lengths of time: natural images (such as animals or people), artificial geometric shapes, and letters of the alphabet.

Participants’ brain activity was measured either while they were looking at the images or afterward. To measure activity after viewing, participants were asked to think about the images they’d been shown. The recorded activity was then fed into a neural network that “decoded” the data and used it to generate its own interpretation of what the participants were seeing or recalling.

Activity in the visual cortex was measured using functional magnetic resonance imaging (fMRI) and then translated into the hierarchical features of a deep neural network. The system then repeatedly adjusts an image’s pixel values until the network’s features for that image approach the features decoded from brain activity.
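The two steps described above (decoding brain activity into network features, then optimizing pixels to match them) can be illustrated with a toy sketch. This is not the Kyoto team’s code: the two-layer tanh “network”, the linear model that turns features into simulated voxel responses, the ridge-regression decoder, and every size and learning rate are hypothetical stand-ins chosen so the example runs with NumPy alone.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy "DNN": two fixed random tanh layers standing in for a
# hierarchy of lower- and higher-level visual features (not a real CNN).
N_PIX, N_H1, N_H2 = 16, 24, 8
W1 = rng.normal(0.0, 1.0 / np.sqrt(N_PIX), (N_H1, N_PIX))
W2 = rng.normal(0.0, 1.0 / np.sqrt(N_H1), (N_H2, N_H1))


def features(x):
    """Return the per-layer features (h1, h2) of a flattened image x."""
    h1 = np.tanh(W1 @ x)
    h2 = np.tanh(W2 @ h1)
    return h1, h2


def simulate_voxels(feats, mixing, noise=0.1):
    """Hypothetical fMRI model: voxels are a noisy linear mix of DNN features."""
    return feats @ mixing + noise * rng.normal(size=(feats.shape[0], mixing.shape[1]))


def fit_decoder(voxels, feats, lam=1.0):
    """Ridge regression mapping voxel patterns to concatenated DNN features."""
    n_vox = voxels.shape[1]
    return np.linalg.solve(voxels.T @ voxels + lam * np.eye(n_vox), voxels.T @ feats)


def loss_and_grad(x, t1, t2):
    """Squared feature error summed over both layers, and its pixel gradient."""
    h1, h2 = features(x)
    r1, r2 = h1 - t1, h2 - t2
    loss = np.sum(r1 ** 2) + np.sum(r2 ** 2)
    g_a2 = 2.0 * r2 * (1.0 - h2 ** 2)        # back through layer-2 tanh
    g_h1 = 2.0 * r1 + W2.T @ g_a2            # layer-1 error plus layer-2 error
    g_x = W1.T @ (g_h1 * (1.0 - h1 ** 2))    # back through layer-1 tanh
    return loss, g_x


def reconstruct(t1, t2, steps=1000, lr=0.01):
    """Gradient descent on pixel values toward the decoded features."""
    x = np.zeros(N_PIX)
    history = []
    for _ in range(steps):
        loss, g = loss_and_grad(x, t1, t2)
        history.append(loss)
        x -= lr * g
    return x, history


# Training data: random "images", their features, simulated voxel responses.
X_train = rng.normal(size=(300, N_PIX))
F_train = np.array([np.concatenate(features(x)) for x in X_train])
mixing = rng.normal(size=(N_H1 + N_H2, 100))
decoder = fit_decoder(simulate_voxels(F_train, mixing), F_train)

# Test image: simulate its "brain response", decode features, reconstruct pixels.
x_true = rng.normal(size=N_PIX)
v_test = simulate_voxels(np.concatenate(features(x_true))[None, :], mixing)
decoded = (v_test @ decoder)[0]
x_rec, history = reconstruct(decoded[:N_H1], decoded[N_H1:])
print("feature loss: %.3f -> %.3f" % (history[0], history[-1]))
print("pixel correlation:", np.corrcoef(x_true, x_rec)[0, 1])
```

Because the loss sums the mismatch at both layers, the optimized pixels are pulled toward the decoded lower-level and higher-level features at once, a simplified version of combining hierarchical information. Noise in the decoded features means the result need not match the original image exactly.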

The model was less successful at decoding brain activity when people were asked to remember images than when they were viewing them directly. The reconstructed images retain some resemblance to the originals viewed by participants, but largely appear as minimally detailed blobs. Even so, the results suggest that hierarchical visual information in the brain can be effectively combined to reconstruct both perceived and subjectively recalled images. The approach may offer a new way to exploit the information carried by hierarchical visual features.